About Volumes

Microsoft Azure is available on demand. Please contact our support team for more information.

What is a volume?

Cloud storage providers come with their own interfaces, features and terminology. At a certain level, though, they all view resources as data objects organized in repositories. Authentication and operations are commonly defined on those objects and repositories, and while each cloud provider might call these things different names and apply different parameters to them, their basic behavior is the same.

Access to these repositories is mediated by BioData Catalyst powered by Seven Bridges (the Platform) through volumes. A volume is associated with a particular cloud storage repository that you have enabled the Platform to read from (and, optionally, to write to). Currently, volumes may be created using the following types of cloud storage repositories: Amazon Web Services (AWS) S3 buckets, Google Cloud Storage (GCS) buckets and Microsoft Azure storage containers.

A volume enables you to treat the cloud repository associated with it as external storage for the Platform. You can import files from the volume to the Platform to use them as inputs for computation. Similarly, you can write files from the Platform to your cloud storage by exporting them to your volume. Please note that export to a volume is available only via the API (including API client libraries) and through the Seven Bridges CLI.

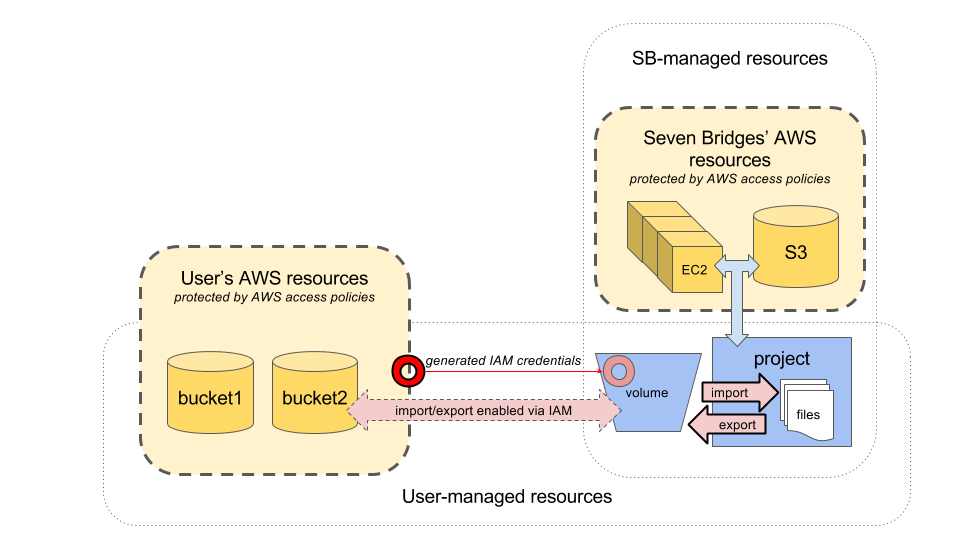

The diagram shows the relationship between a user's AWS repositories, bucket1 and bucket2, and a volume, through which the contents of the buckets can be imported into, and exported from, a project on the Platform.

An AWS S3 volume enables data import and export operations via a set of IAM credentials generated by the user.

What information should I provide?

To create a volume on the Platform, you should provide the following information:

Volume name

A volume name consists of 3 to 32 characters, which may be letters of the English alphabet, numbers, and underscores (_). Each of your volumes must have a unique name.
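The naming rule above can be checked locally before a volume is created. The following is a minimal sketch, not the Platform's own validation logic: the function name `is_valid_volume_name` is hypothetical, and it assumes that both uppercase and lowercase letters are accepted. Uniqueness among your volumes cannot be checked this way and is not covered.

```python
import re

# Assumption: 3 to 32 characters drawn from English letters (either case),
# digits, and underscores, as described in the text above.
_VOLUME_NAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,32}")


def is_valid_volume_name(name: str) -> bool:
    """Return True if `name` satisfies the documented character and length rule."""
    # fullmatch requires the whole string to match, not just a part of it.
    return _VOLUME_NAME_PATTERN.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ["my_volume_1", "ab", "has-dash", "x" * 33]:
        print(candidate, is_valid_volume_name(candidate))
```

A check like this only catches formatting problems early; the Platform remains the authority on whether a name is accepted.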

Access mode

The access mode of a volume is either read-only (RO) or read-write (RW). Each volume will have its access mode set by default to read-only.

Read-only volumes can only be used to make files available to the Platform for reading; for example, by importing files into projects. The contents of read-write volumes can be modified by operations performed with the API. However, it is good practice to avoid configuring your input buckets as read-write, as a safeguard against honest mistakes.
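The two access modes can be modeled in client-side code so that an export is never attempted against a read-only volume. This is an illustrative sketch only: the `AccessMode` enum and the `allows_export` helper are hypothetical names, not part of any Seven Bridges library.

```python
from enum import Enum


class AccessMode(Enum):
    """The two access modes described above."""
    RO = "RO"  # read-only: files can be imported from the volume
    RW = "RW"  # read-write: files can also be written to the volume


# Volumes are read-only unless configured otherwise.
DEFAULT_ACCESS_MODE = AccessMode.RO


def allows_export(mode: AccessMode) -> bool:
    """Return True if a volume with this access mode can receive exported files."""
    return mode is AccessMode.RW


if __name__ == "__main__":
    print(allows_export(DEFAULT_ACCESS_MODE))
    print(allows_export(AccessMode.RW))
```

Keeping the default at read-only in your own tooling mirrors the safeguard recommended above for input buckets.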

Cloud service configuration

To access the cloud storage provider on your behalf, the Platform needs to know the type of storage provider it should talk to, and be configured to interface with it.

As each cloud storage provider supports slightly different features and authorization schemas, the configuration will be different for each storage type. Depending on your selected storage provider and your intended use of the volume, you may want to set additional properties to further modify the way the Platform accesses it.

For more details on each supported storage type and its corresponding configuration, see the following pages:

- Amazon Web Services' Simple Storage Service (AWS S3) Volumes

- Google Cloud Storage (GCS) Volumes

- Microsoft Azure Volumes

Prefix (optional)

A prefix is a string that can be set on a volume to refer to a specific location on a single bucket. This feature can be used to replicate the folder or grouping structure inside a bucket in your cloud storage account. As such, a prefix is useful if you have grouped files devoted to distinct projects within a single bucket.

For example, on Amazon S3, folders can also be assigned specific access permissions in the bucket policy itself, or an IAM user can be configured to have read-only or read-write access for each folder. When you assign your volume a prefix as well as a bucket, you limit the volume's operations to the specified folder in the bucket.

For example, if you set the prefix for a volume to "a10", and import a file with location set to "test.fastq" from the volume to the Platform, then the object that will be referred to by the newly-created alias will be "a10/test.fastq".
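The way a prefix and an import location combine can be sketched as a small helper. This is a simplified illustration based on the example above, not the Platform's implementation: the function name `resolve_object_key` is hypothetical, and the handling of stray slashes and of an empty prefix is an assumption made so the sketch behaves predictably.

```python
def resolve_object_key(prefix: str, location: str) -> str:
    """Combine a volume prefix and an import location into a bucket object key."""
    # Assumption: a volume without a prefix refers to the location as given.
    if not prefix:
        return location
    # Assumption: redundant slashes at the join point are dropped so that
    # exactly one "/" separates the prefix from the location.
    return f"{prefix.rstrip('/')}/{location.lstrip('/')}"


if __name__ == "__main__":
    # Mirrors the example in the text: prefix "a10", location "test.fastq".
    print(resolve_object_key("a10", "test.fastq"))
```

With the values from the example above, the helper yields the object key "a10/test.fastq".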

What can I do with a volume?

Once a volume has been configured, you can make its data objects available for computation on the Platform, and copy files to the volume from the Platform.

Data from attached volumes can be used on the Platform both through the visual interface and the API. Exports to attached volumes are supported only to certain cloud providers and only via the API.

API operations on volumes
