Troubleshooting tutorial

BioData Catalyst powered by Seven Bridges

Troubleshooting Failed Tasks

Often the first step to a user becoming comfortable using BioData Catalyst powered by Seven Bridges is their gaining confidence in resolving issues they encounter on their own. This confidence usually comes with experience – the experience with bioinformatics tools and Linux environment in general, but also the experience with the platform features.

However, one of the reasons for developing the platform in the first place is to enable an additional level of abstraction between the users and low-level command line work in the terminal. Even though there are a number of platform features that help with tracking down the issues, the less-experienced users can still face challenges with troubleshooting because the whole process might assume familiarity digging through the tool and system messages.

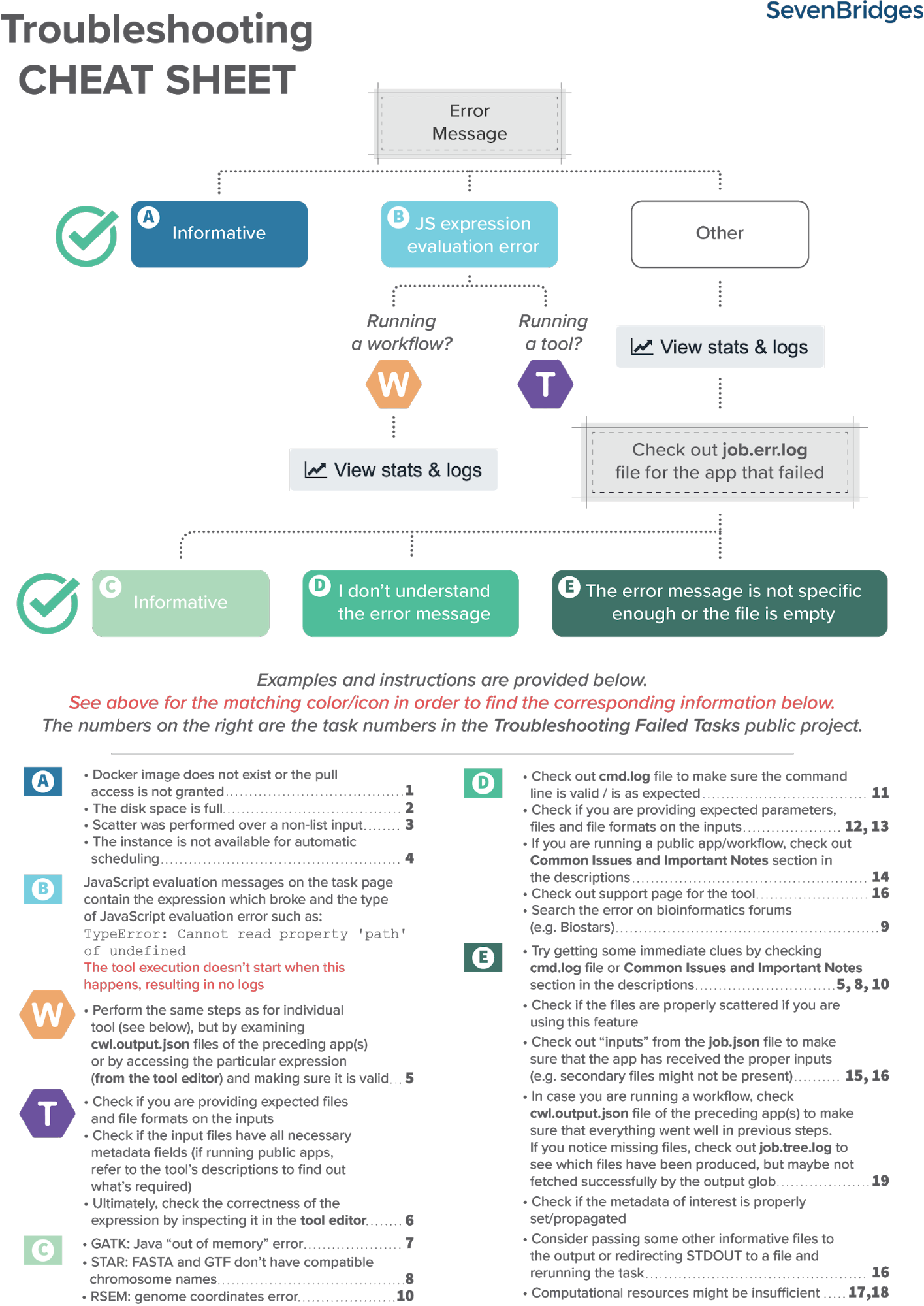

Fortunately, there is a set of steps that most often brings us to the solution. Based on internal knowledge and experience, the Seven Bridges team has come up with the Troubleshooting Cheat Sheet (Figure 1) which should help you navigate through the process of resolving the failed tasks.

Helpful terms to know

Tool / App (interchangeably used) – refers to a bioinformatics tool or its Common Workflow Language (CWL) wrapper that is created or already available on the platform.

Workflow / Pipeline (interchangeably used) – denotes a number of apps connected together in order to perform multiple analysis steps in one run.

Task – represents an execution of a particular app or workflow on the platform. Depending on what is being executed (app or workflow), a single task can consist of only one tool execution (app case) or multiple executions (one or more per each app in the workflow).

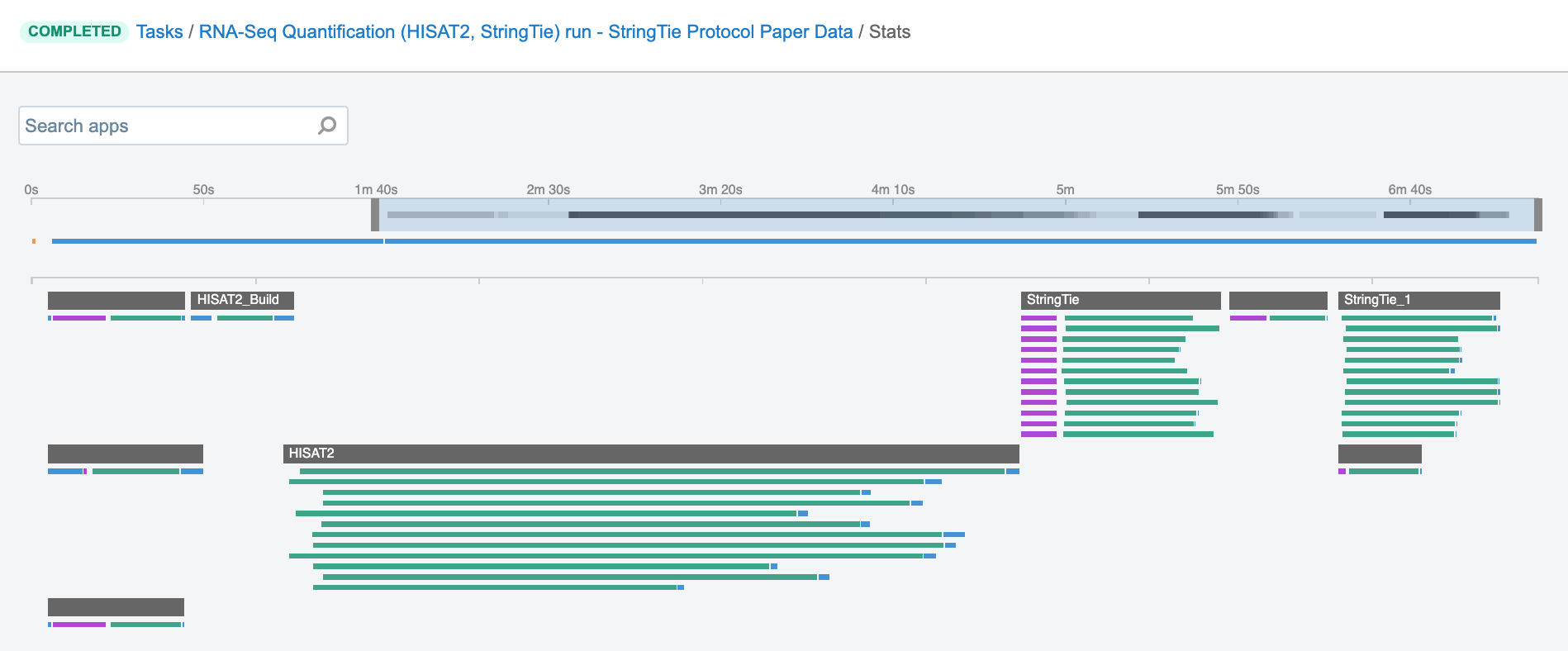

Job – this term can practically be translated to the “execution” term from the “Task” definition (see above). It represents a single run of a single app found within a workflow. If you are coming from a computer science background, you’ll notice that the definition is quite similar to a common understanding of the term “job” (wikipedia). Except that the “job” is a component of a bigger unit of work called a “task” and not the other way around, as in some other areas may be the case. To further illustrate what job means on the platform, we can use the View stats & logs panel (button in the upper right corner on the task page) where we can visually inspect jobs after the task has been executed:

The green bars under the gray ones (apps) represent the jobs (Figure 1). As you can see, some apps (e.g. HISAT2_Build) consist of only one job, whereas others (e.g. HISAT2) contain multiple jobs that are executed simultaneously.

Getting started

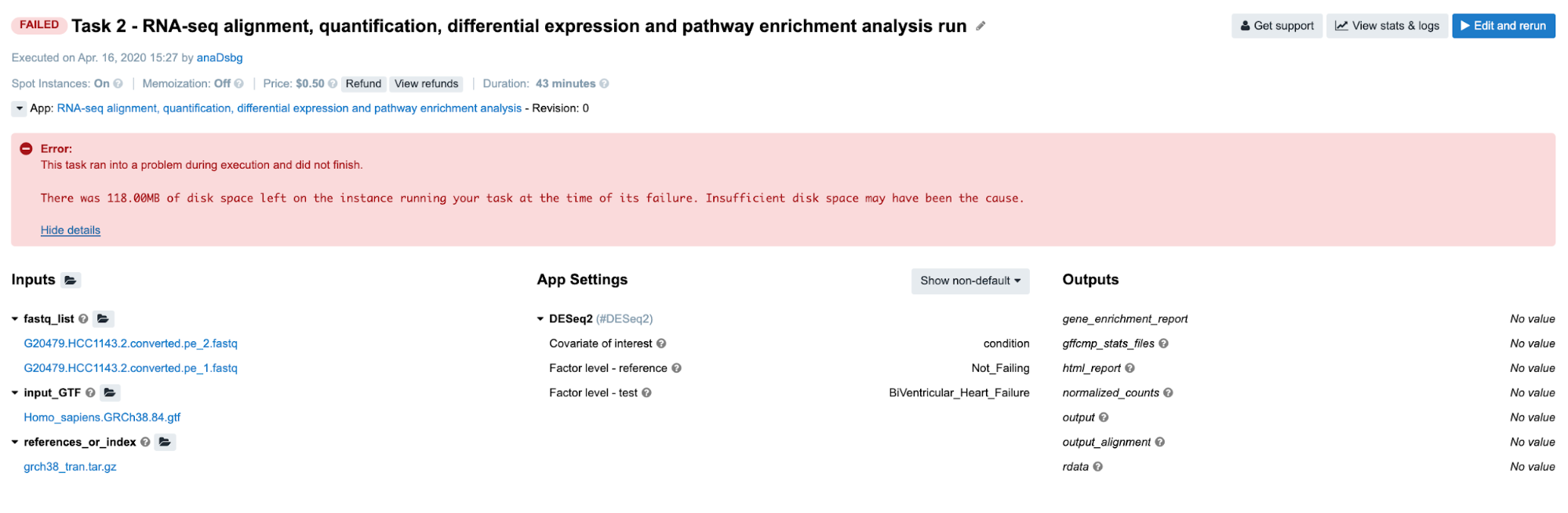

First, let us learn where to look if something does go wrong: in case the task fails, there is an error message on the task page which may help you solve the problem right away. The bioinformatician who always attempts to debug the failed task themselves will instinctively look at this error message first. There are a number of different occasions on which the platform services will catch the exact reason for failure and display it on the task page. One such use case in which the issue is apparent from the error message on the task page is the lack of disk space (Figure 3).

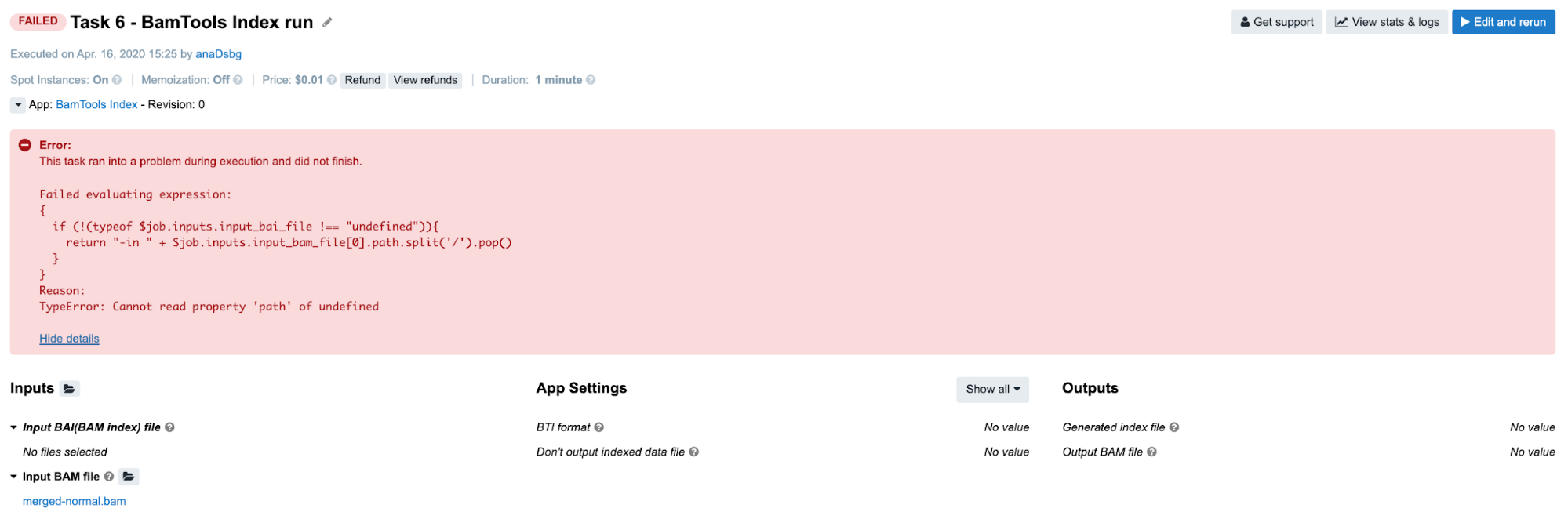

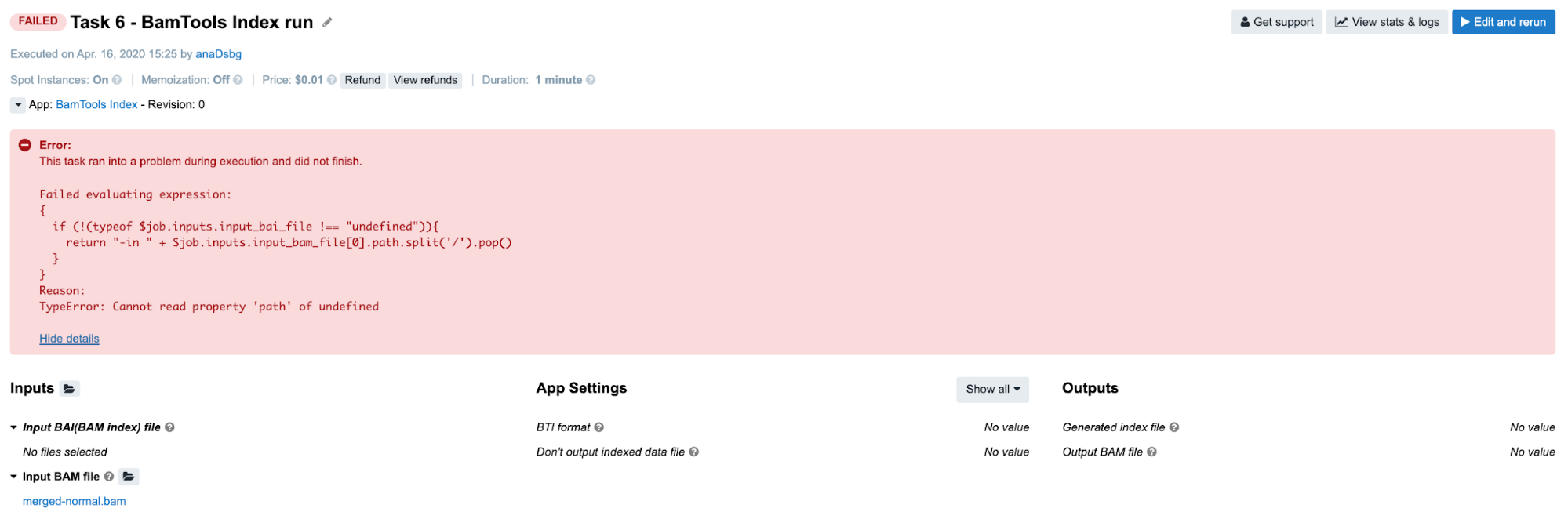

Another type of error message on the task page which is a bit less intuitive but can provide enough clues for solving the problem is JavaScript expression evaluation error (Figure 4). This one is particularly useful if the person who debugs the task is the same person who built the wrapper which throws the error. Otherwise, the person who debugs the task will only need to spend slightly more time figuring out what the expression does in the wrapper.

The easiest way to start dealing with this type of error is to go into the app’s editor mode and examine the expression that failed (if it is too much hassle to do that by observing it directly on the task page) and examine those inputs which are used by the expression and their types (in this case input_bam_file). After performing these checks, we conclude that we falsely assumed that input_bam_file input was a list of files. We tried to get the path of the first item of that list in the JavaScript expression ($job.inputs.input_bam_file[0]), which resulted in TypeError because a single file is expected on this particular input.

However, in the majority of other cases it won’t be possible to tell the reason for the failure only by looking at the error displayed on the task page (Figure 5).

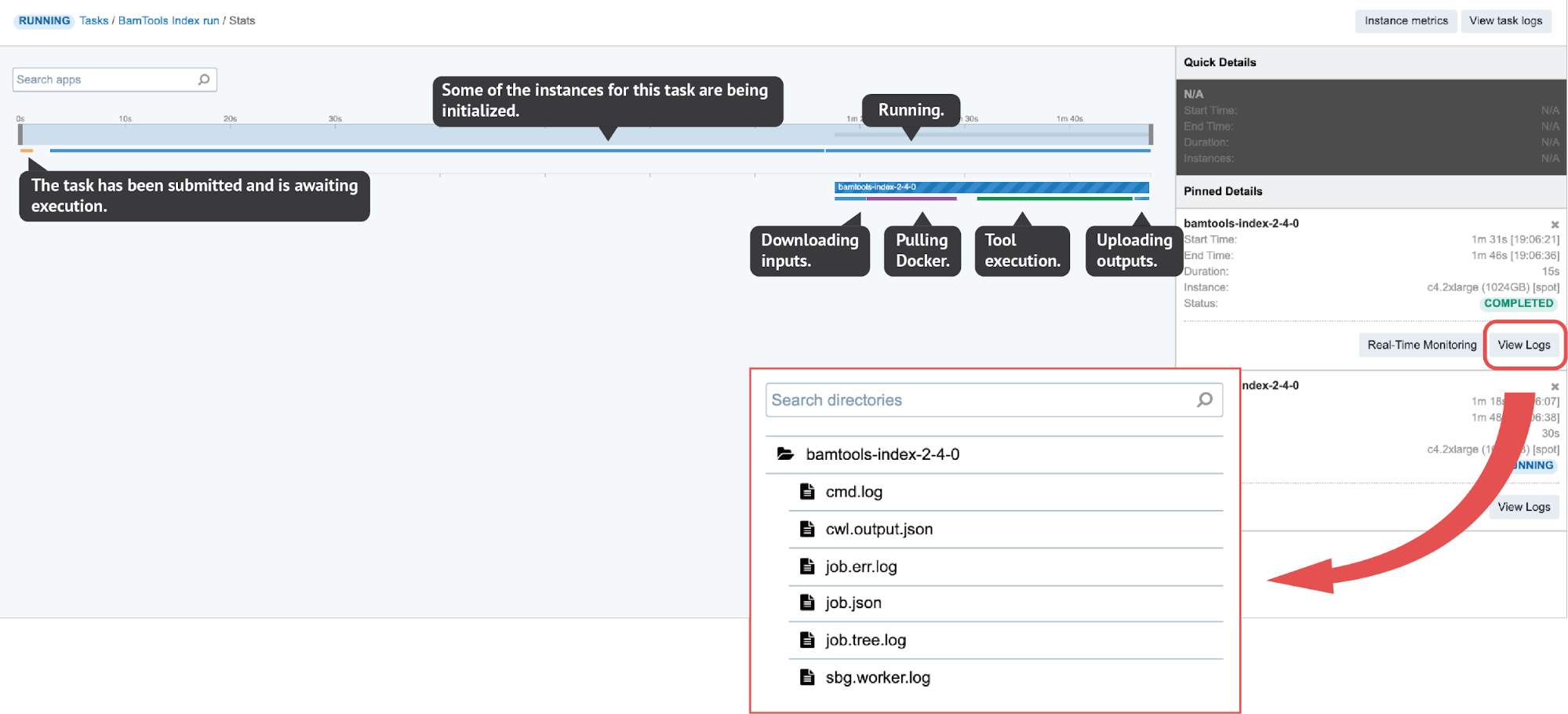

In such cases we proceed to the View stats & logs panel (see button in the upper right corner on Figure 5) which gives us all the details related to the run.

job.tree.log available only after the task is completed or failed). On the other hand, the Real-Time Monitoring button enables users to open up a panel where they can explore the contents of those files while being generated.

There are several interactive features within the View stats & logs panel that you can use to examine your run (Figure 6). However, here we will focus on illustrating the troubleshooting flow with a few examples rather than explaining those features in detail. If you want to learn more about this before getting to the examples, you can do so by checking out the documentation pages – task stats, task logs, job monitoring and instance metrics page.

As already mentioned, there are common steps that usually lead to the problem’s solution and which we tried to condense into the Troubleshooting cheat sheet. Herein, we will apply it to a couple of failed tasks.

Examples: Quick & Unambiguous

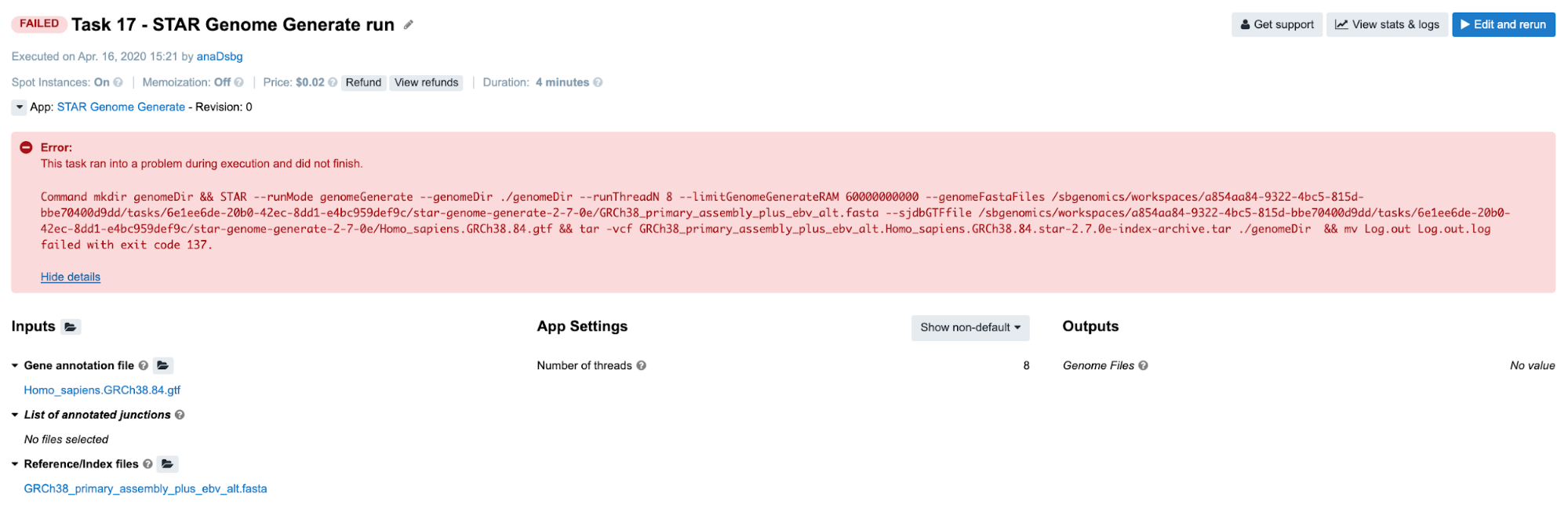

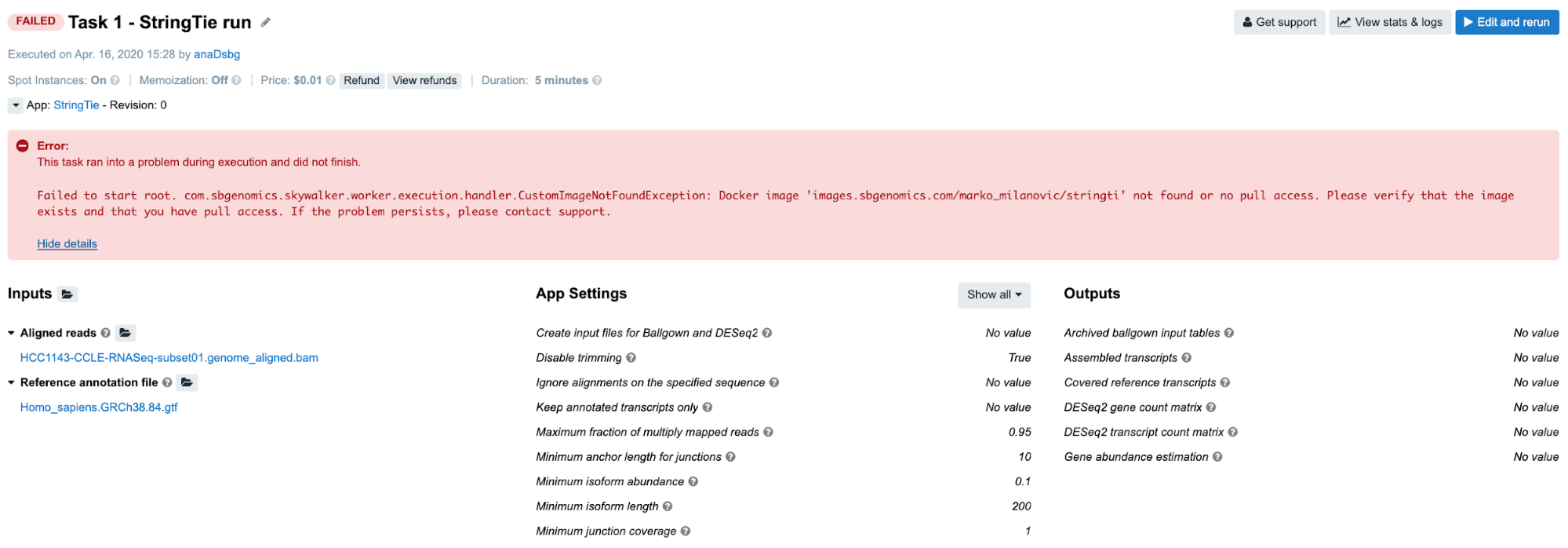

Task 1: Docker image not found

Diagnosis: As per error message on the task page, there is a problem with the docker image. If we take a closer look, there is a typo in the docker image name – instead of images.sbgenomics.com/marko_milanovic/stringti we should’ve had images.sbgenomics.com/marko_milanovic/stringtie.

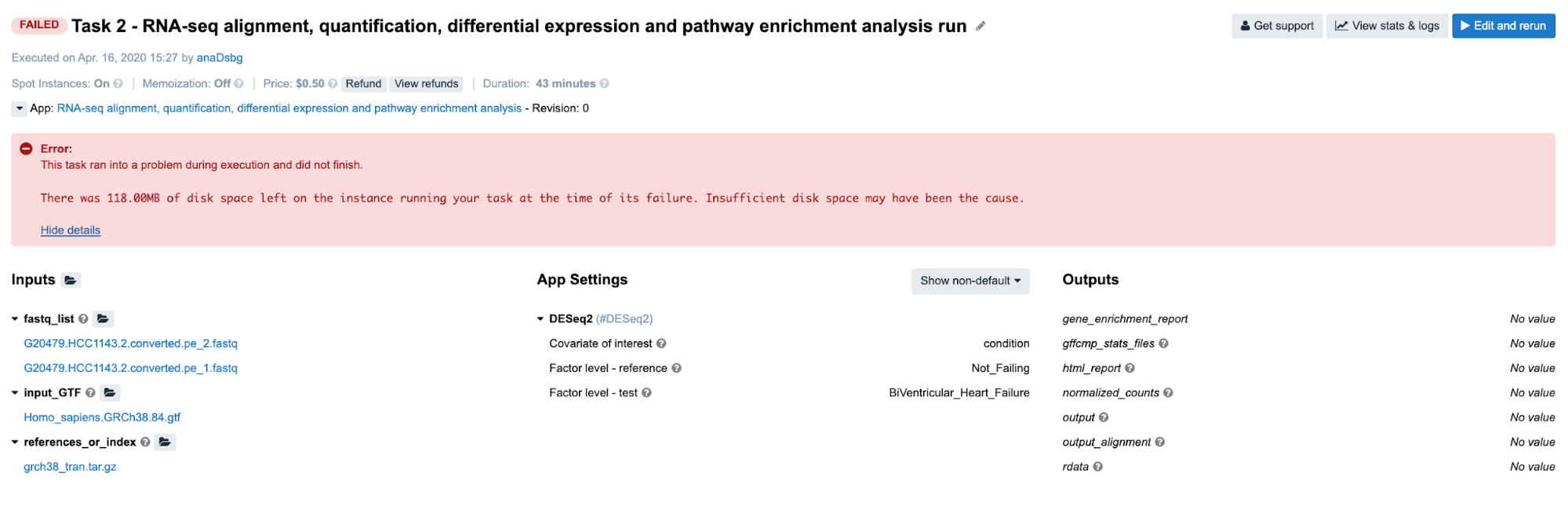

Task 2: Insufficient disk space

Diagnosis: Again, it is obvious from the error message on the task page that the task has failed due to lack of disk space.

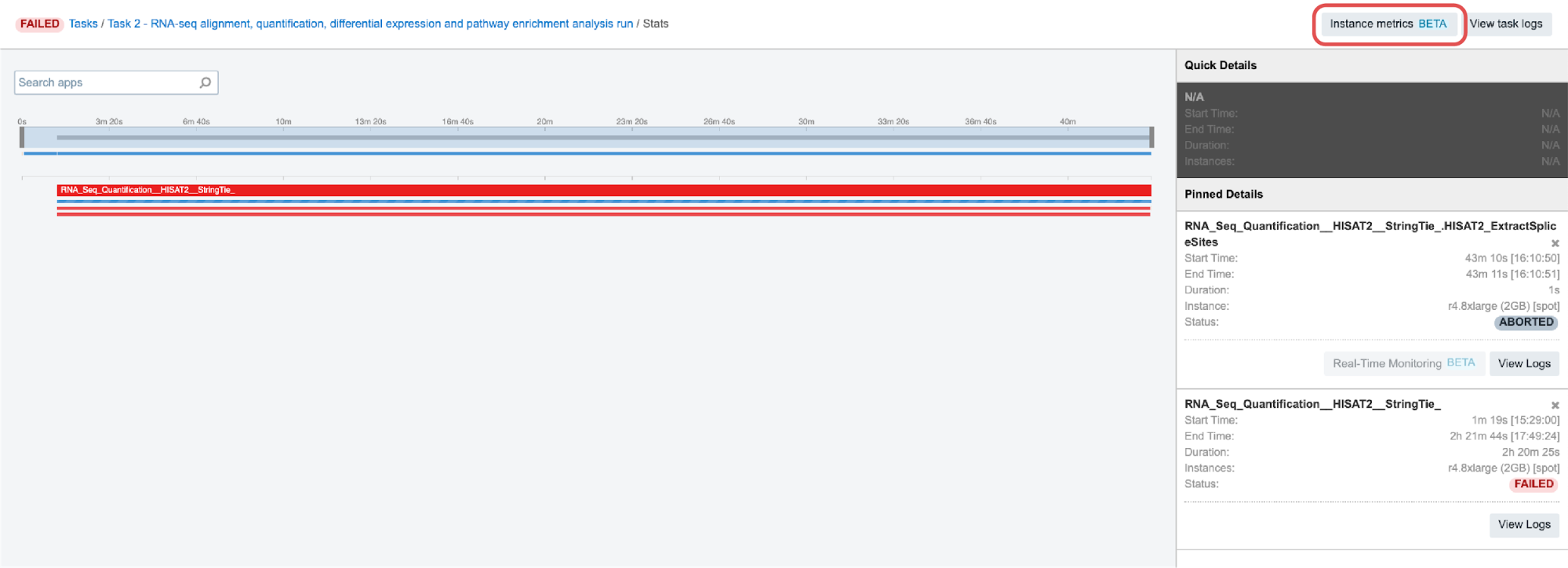

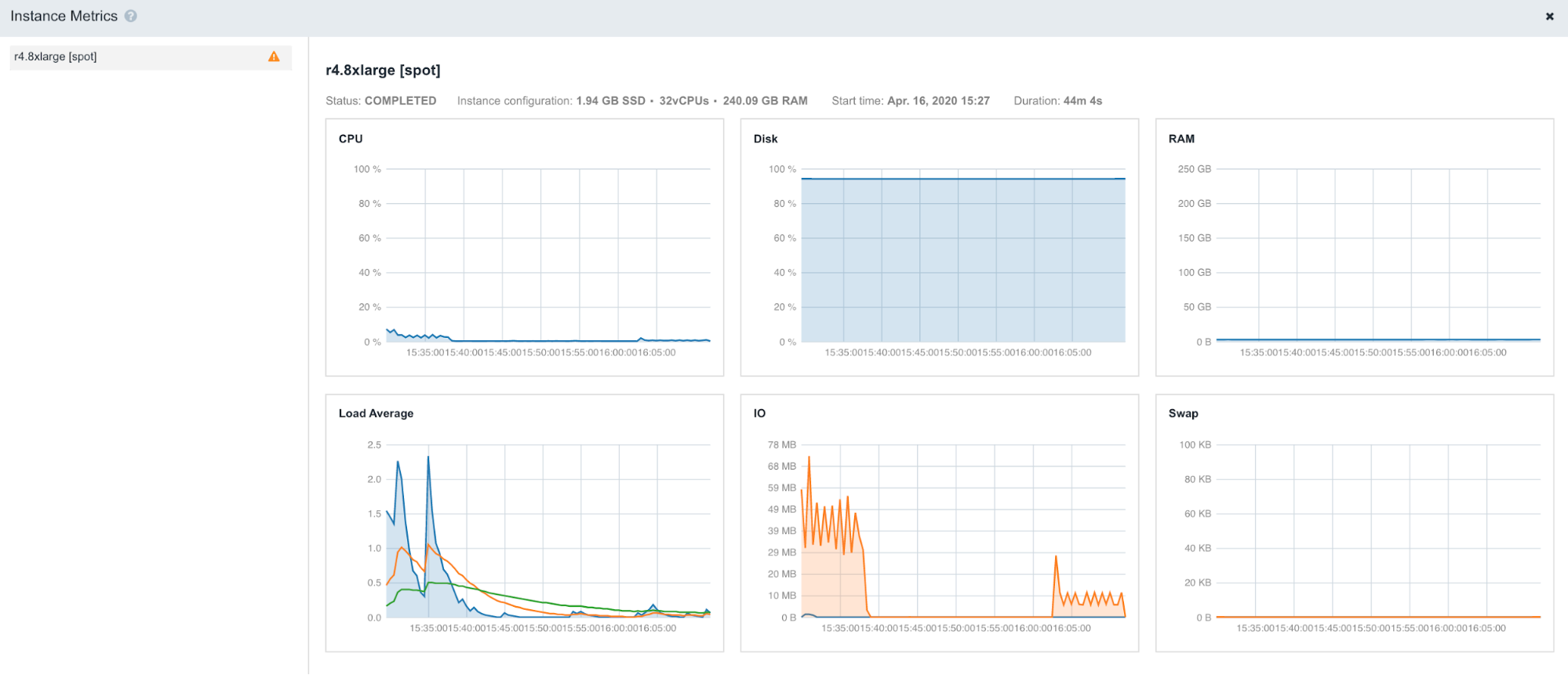

This can be also confirmed by checking out Instance metrics diagrams from the View stats & logs panel:

As we see, the line showing level of the disk space usage (second diagram from left on Figure 8) is very close to 100%:

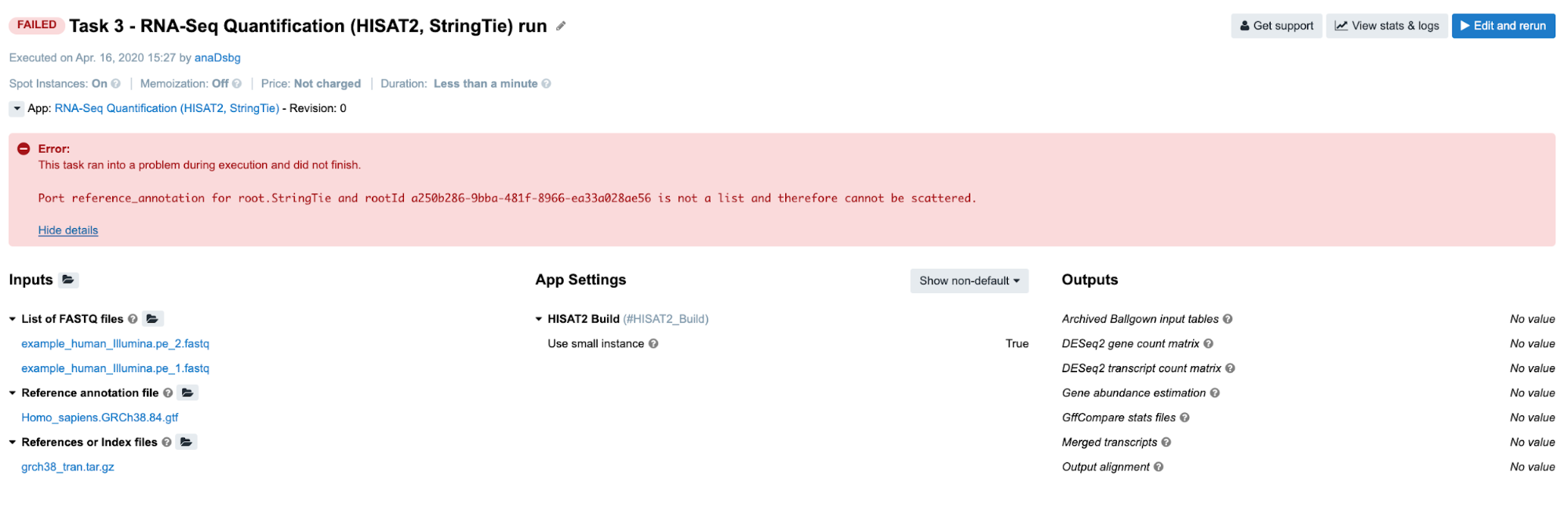

Task 3: Scatter over a non-list input

Diagnosis: Stringtie app was configured to perform scattering over the Reference annotation file input, and therefore expects an array (list) to be provided as an input. Instead of an array, a single file was provided.

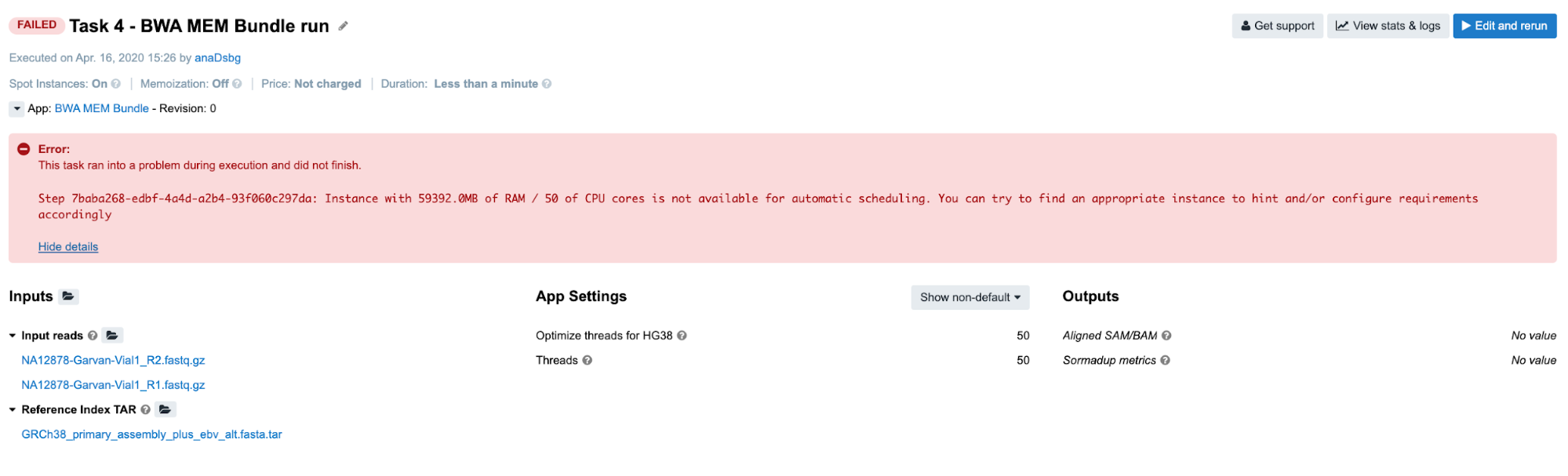

Task 4: Automatic allocation of the required instance is not possible

Diagnosis: In this example, we tried to automatically allocate an instance which will have at least 59,392 MiB of memory and 50 CPUs. To prevent unexpected outcomes due to wrong allocation requirements settings, spinning up the bigger instances is only enabled through the execution hints.

This applies to the cases in which the suitable instance type has 64 GiB of memory or more. In this example, the instance that fits both requirements (59,392 MiB of memory and 50 CPUs) is m4.16xlarge which has 64 GiB of memory and therefore cannot be allocated unless explicitly set through the execution hints.

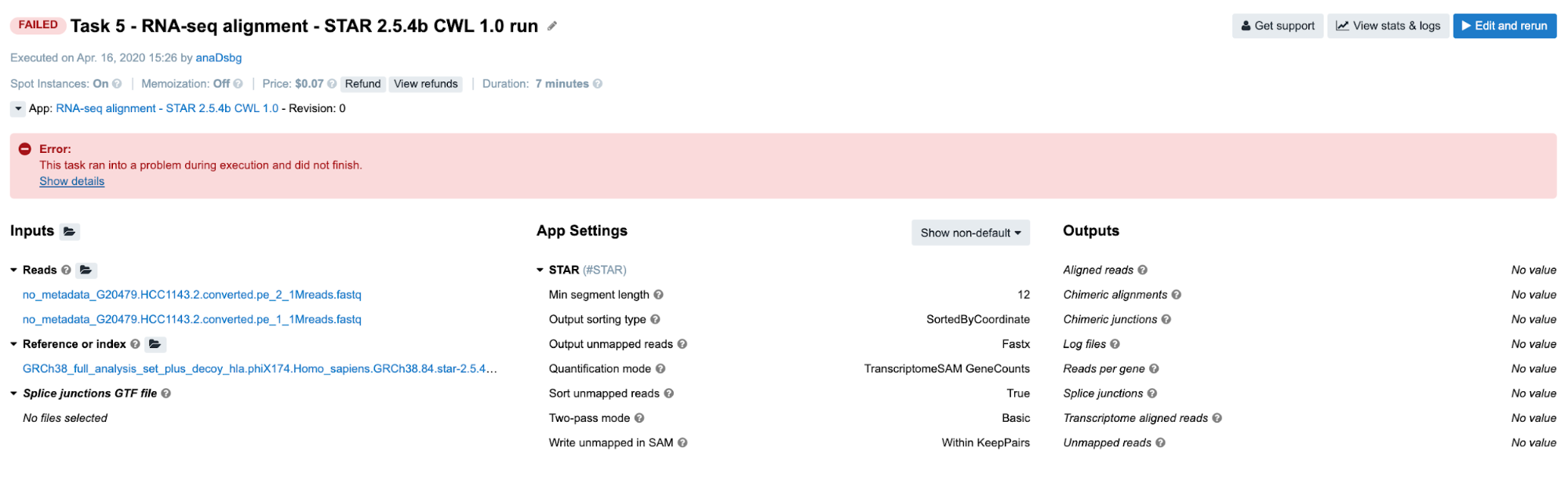

Task 5: JavaScript evaluation error due to lack of metadata

Diagnosis: If you click on Show details in the error message box, you will get the code of a long JavaScript expression with the evaluation error message in the bottom: ReferenceError: tmp is not defined. First of all, don’t let the length of the expression scare you away – this is a pretty simple JavaScript expression which we use frequently to ensure that the paired-end reads will get inserted into the command line in the right order. What we use to help us figure out which file(s) should go first is paired_end metadata. Some tools can have multiple samples analyzed at once, so we needed to include checks for sample_id metadata as well. Therefore, we ended up using information about those two on a regular basis in our apps and workflows. The variable tmp is only intended for storing some intermediary results and it is not defined here because our input files don’t contain metadata that we need (check this out by clicking on files under the Reads input).

Important Note: If you end up debugging a task which failed with JavaScript expression error, you won’t have log files for the corresponding tool, since the tool itself didn’t even start the execution. In case you are running a workflow and want to examine the files that were passed to the tool that failed, you can do so by checking out the outputs from the previous tools. All the information about outputs are contained within cwl.output.json file (Figure 6). To go through an example in which this file has been used for debugging, jump to Task 19.

Task 6: Invalid JavaScript indexing

Diagnosis: Again, we encounter a JavaScript evaluation error which is caused by invalid input indexing. See the Troubleshooting Cheat Sheet for more details.

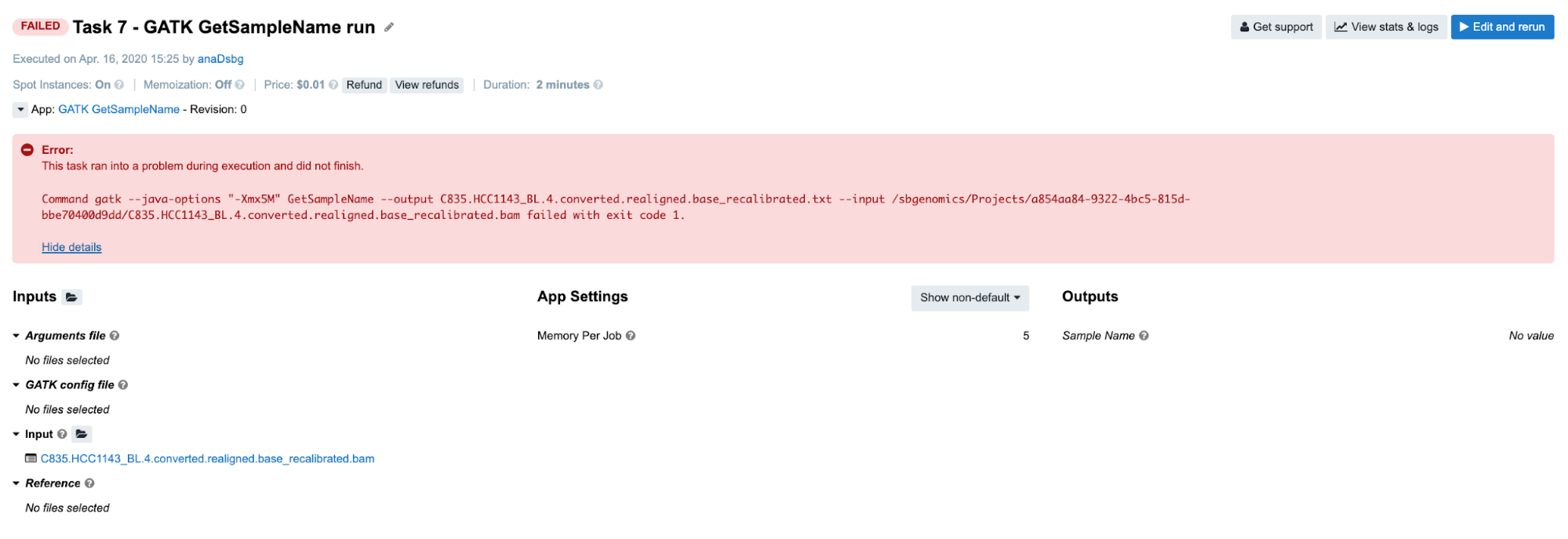

Task 7: Insufficient memory for a Java process

Diagnosis: As opposed to previous examples, in this one we can’t figure out the issue based solely on the error message on the task page. As you can see, the command which is printed out in the error message failed with exit code 1. In programming, any non-zero exit code indicates that there was an issue in a certain step within the tool, but the meaning of the exit code number can vary in different tools. Because of that, it is time to dig deeper into the task. Following the Troubleshooting Cheat Sheet (Figure 1), we end up examining the job.err.log file under View stats & logs (Figure 6) which starts with the following line:

2019-12-19T17:47:47.804011467Z Exception in thread "main" java.lang.OutOfMemoryError: GC overhead limit exceeded

Note that the memory-related exception has been indicated which leads us to the conclusion that we should increase the memory that we pass to Java processes. This means that the value of the parameter Memory Per Job is insufficient. The value of this parameter is used to determine the "-Xmx5M" Java parameter which you can also see by looking into the app’s wrapper from the editor.

Examples: File compatibility challenges

Before jumping to the next few examples, we would like to dedicate a couple of paragraphs to file compatibility issues. Although the issues presented may not be strictly specific to RNA-seq analysis only, many users encountered issues while working with RNA-seq in particular. Therefore, we will focus only on RNA-seq examples here, though it will be worthwhile to go through the following material even if you don’t deal with RNA-seq data in your research.

In contrast to many other tools, RNA-seq tools rely not only on reference genome, but also on gene annotations. Considering that there are a number of different versions and builds of both genome and gene references, pairing these together and getting exactly what you intend may sometimes pose a serious challenge.

Additionally, different tools will treat genome-gene matches differently. Some tools may complete runs successfully even though we provide incompatible pairs on the input, while others may only take chromosome names into consideration regardless of the reference versions. For instance, STAR will execute successfully when the gene annotations and genome are of different builds (GRCh37/hg19 and GRCh38/hg38), but follow the same chromosome naming convention (either “1” or “chr1”).

Since we cannot rely on tools to tell us if we have incompatible input files, the responsibility for matching the reference files correctly falls back to the end-user. Even when the user is aware of the compatibility prerequisite, there are still ways the analysis can go wrong. Hence, we will present here a few examples of RNA-seq-typical failures.

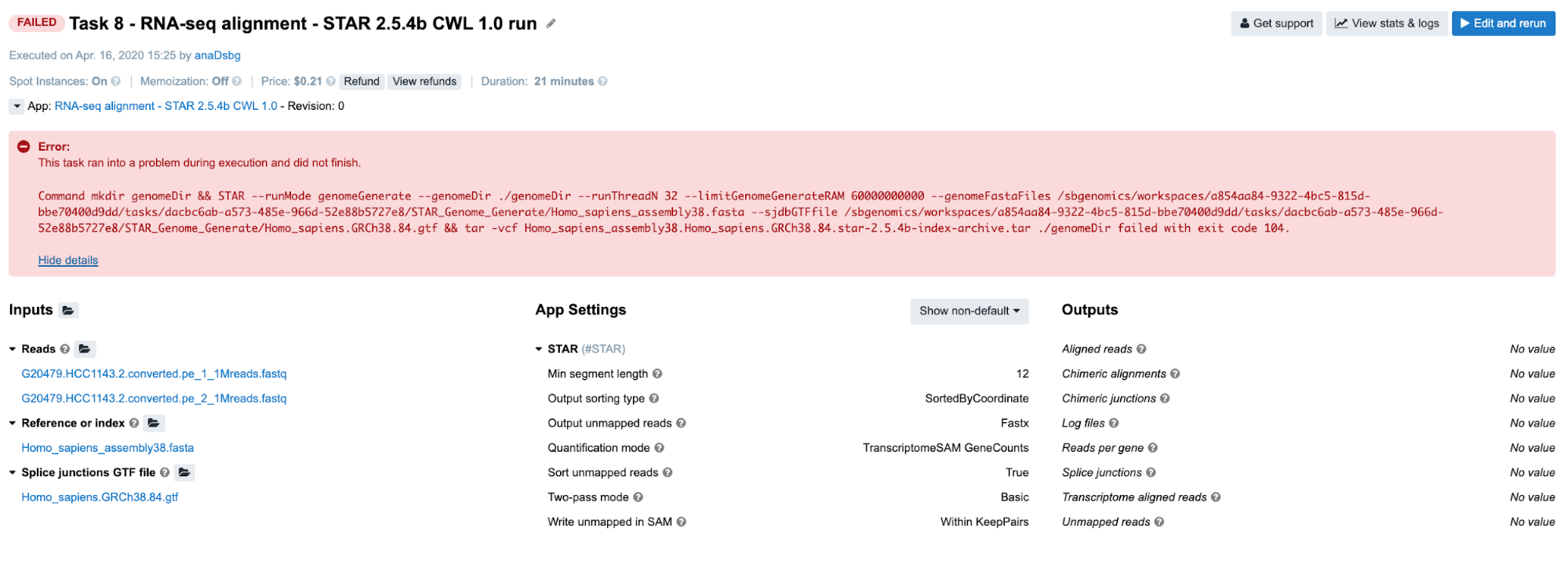

Task 8: STAR reports incompatible chromosome names

Diagnosis: If we follow the steps from the Troubleshooting Cheat Sheet, we will end up checking the job.err.log file and getting the following message:

2019-12-19T18:02:53.407928967Z

2019-12-19T18:02:53.407967204Z Fatal INPUT FILE error, no valid exon lines in the GTF file: /sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/77ff5945-b151-4e6b-b326-8a64aca09157/STAR_Genome_Generate/Homo_sapiens.GRCh38.84.gtf

2019-12-19T18:02:53.407989257Z Solution: check the formatting of the GTF file. Most likely cause is the difference in chromosome naming between GTF and FASTA file.

2019-12-19T18:02:53.407998064Z

2019-12-19T18:02:53.408006077Z Dec 19 18:02:53 ...... FATAL ERROR, exitingIndeed, STAR reports that there is a difference in chromosome naming: reference genome contains “chr1”, whereas gene annotation file has only “1” for chromosome 1.

Another path for troubleshooting is reading through the Common Issues and Important Notes section in the workflow descriptions. We strongly encourage everyone to go through the app/workflow descriptions before running the tasks.

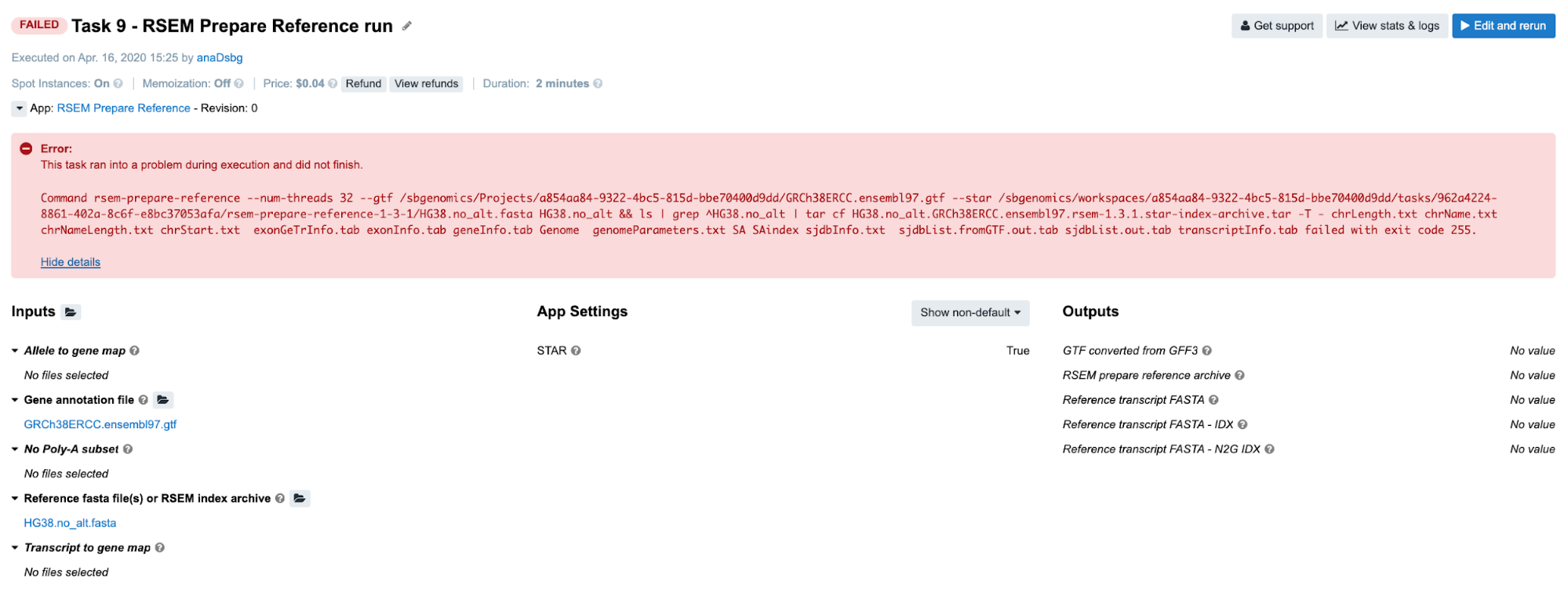

Task 9: RSEM reports incompatible chromosome names

Diagnosis: Again, we examine the job.err.log file content and get some clues from the last rows:

...

2019-12-19T17:44:01.978193140Z Warning: 226998 transcripts are failed to extract because their chromosome sequences are absent.

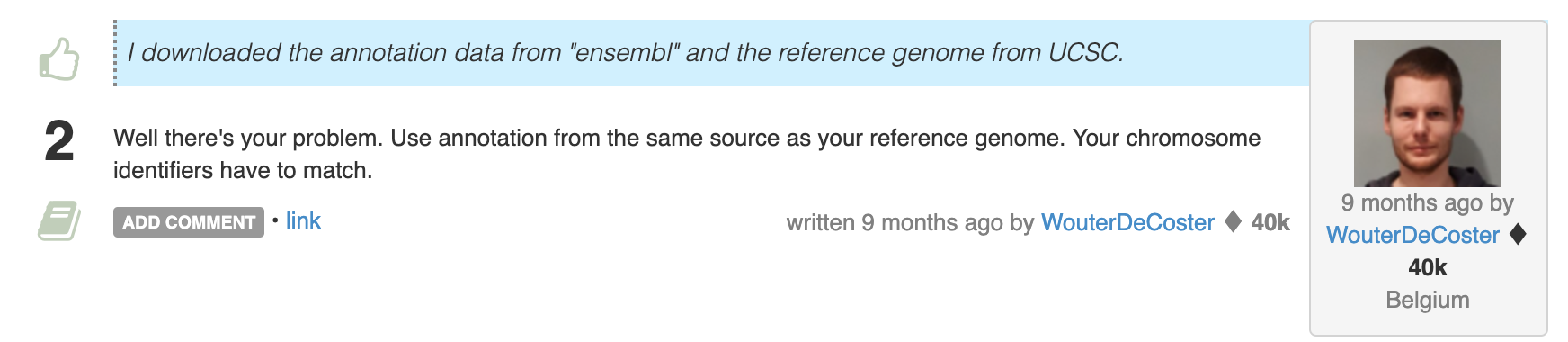

2019-12-19T17:44:02.149201498Z The reference contains no transcripts!Here, the message is not so specific as in the case of STAR’s report. However, it looks like that we have something to begin with and perhaps someone else has experienced the same issue. If we google the message from the last row, this is one of the first answers that we get:

Sounds like a slightly curt answer, but that is because someone finally had enough of people mixing apples and oranges when it comes to matching the genes to genomes.

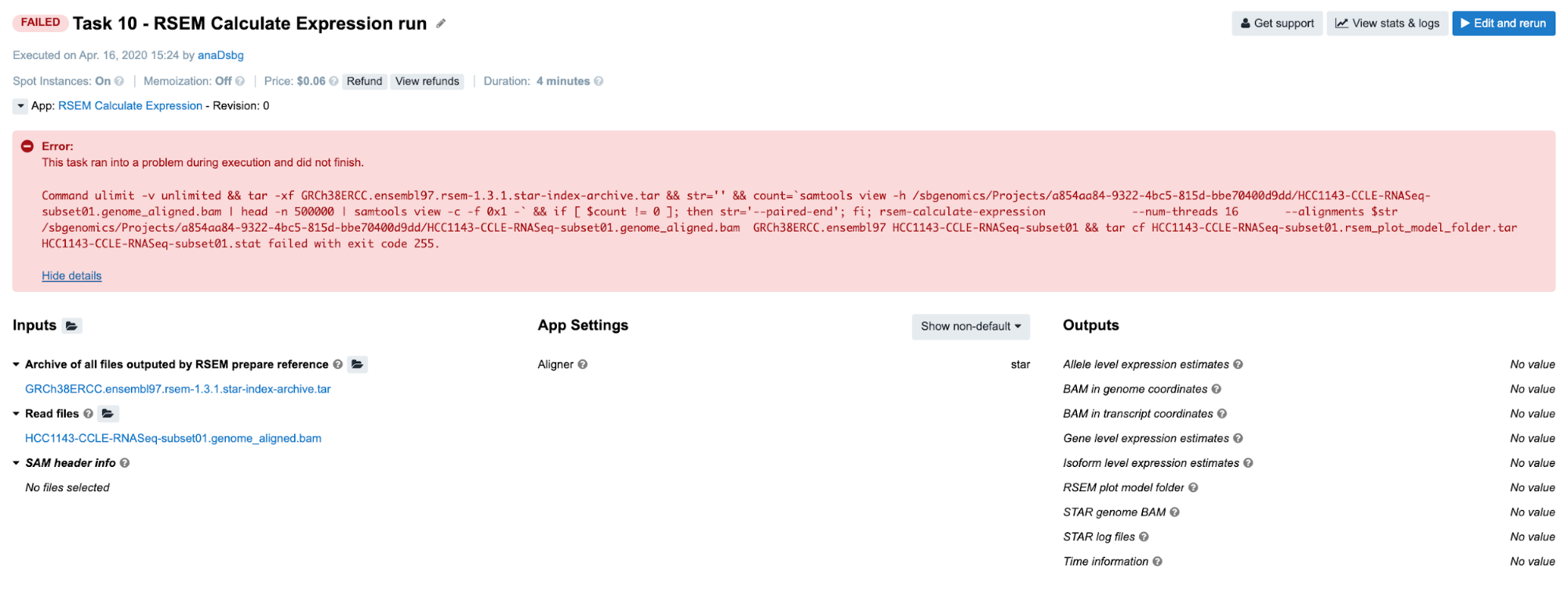

Task 10: Incompatible alignment coordinates

Another very common issue that you may come across is that quantification tools will expect the alignments on transcriptome coordinates. When you are new to bioinformatics, this may not be an obvious consideration point, so you may end up going one step back (to alignment) in order to fix this. The following is an example of RSEM task configuration which leads to an error:

Diagnosis: As you can see, the BAM provided on the input contains “genome_aligned” in its name which tells us that the coordinates are genome coordinates. If we are not aware of this, we may go check the log files first. Again, the following is the content of the job.err.log file:

2019-12-19T17:48:09.610524957Z Warning: The SAM/BAM file declares less reference sequences (287) than RSEM knows (226998)! Please make sure that you aligned your reads against transcript sequences instead of genome.

2019-12-19T17:48:09.857743405Z RSEM can not recognize reference sequence name 1!

And that gives us the needed answer. Note that in case of STAR aligner, the user does not need to provide transcriptome reference explicitly. Setting the parameter for choosing the alignment coordinates (--quantMode or Quantification mode on the platform) solves the problem in a simple way.

Examples: When error messages are not enough

Up to this point, we have been trying to solve the problems only by looking at the error messages on the task page and in the job.err.log file. However, there are few additional resources that may be informative and help you figure out what went wrong.

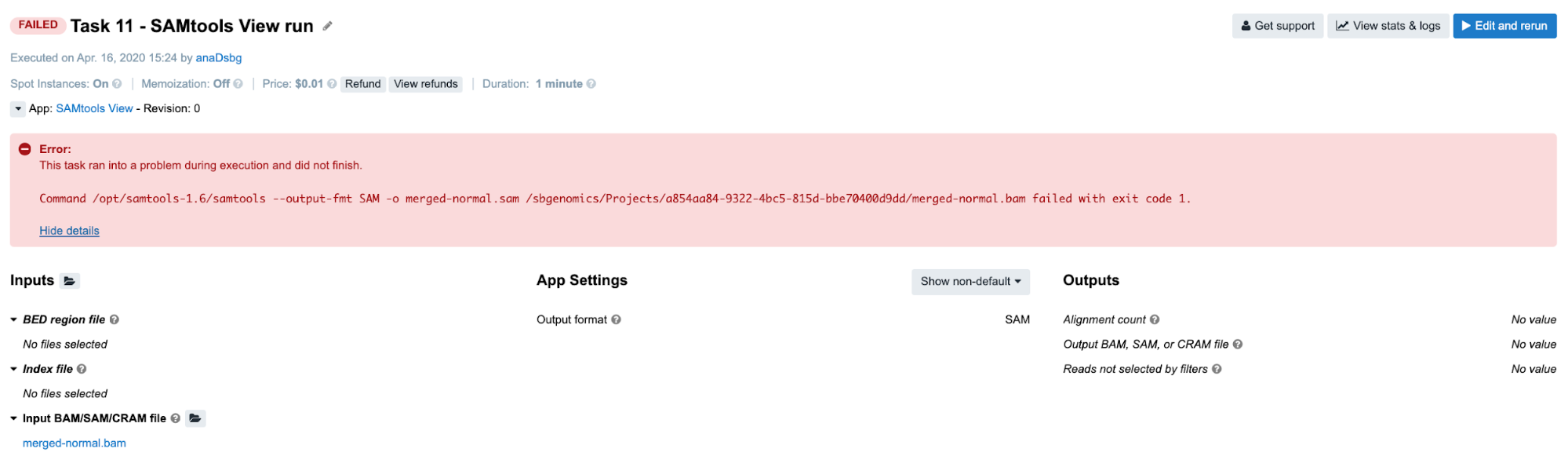

Task 11: Invalid command line

Diagnosis: Following the instructions from the Troubleshooting Cheat Sheet, we will go through the following steps:

-

Error message on the task page is not informative, so we proceed to the

job.err.logfile. -

Content of the

job.err.logfile:2019-12-19T17:45:00.036859241Z [main] unrecognized command '--output-fmt'The error message is quite specific since it refers to the particular samtools argument. However, we still do not know what happened and we decide it is best if we check out the command line itself. By doing that we can see how exactly

--output-fmtgot incorporated into the command line. -

Content of the

cmd.logfile:/opt/samtools-1.6/samtools --output-fmt SAM -o merged-normal.sam /sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/merged-normal.bamAs we can see, the argument

--output-fmtis present in the command line, it is included without any typos and has a valid value which isSAM. However, we notice that we lack a samtools subcommand which isviewin this case. Now, because]--output-fmtargument happens to be placed where subcommandviewis expected, samtools will report it as an unrecognized command since it is neither of the existing subcommands (view,sort,indexetc).In general, invalid command lines tend to produce misleading error messages. Something gets omitted and something else ends up in its place, and usually that “something else” finds its way to the error message as the reason for failing even though the omitted part is the true cause of the failure.

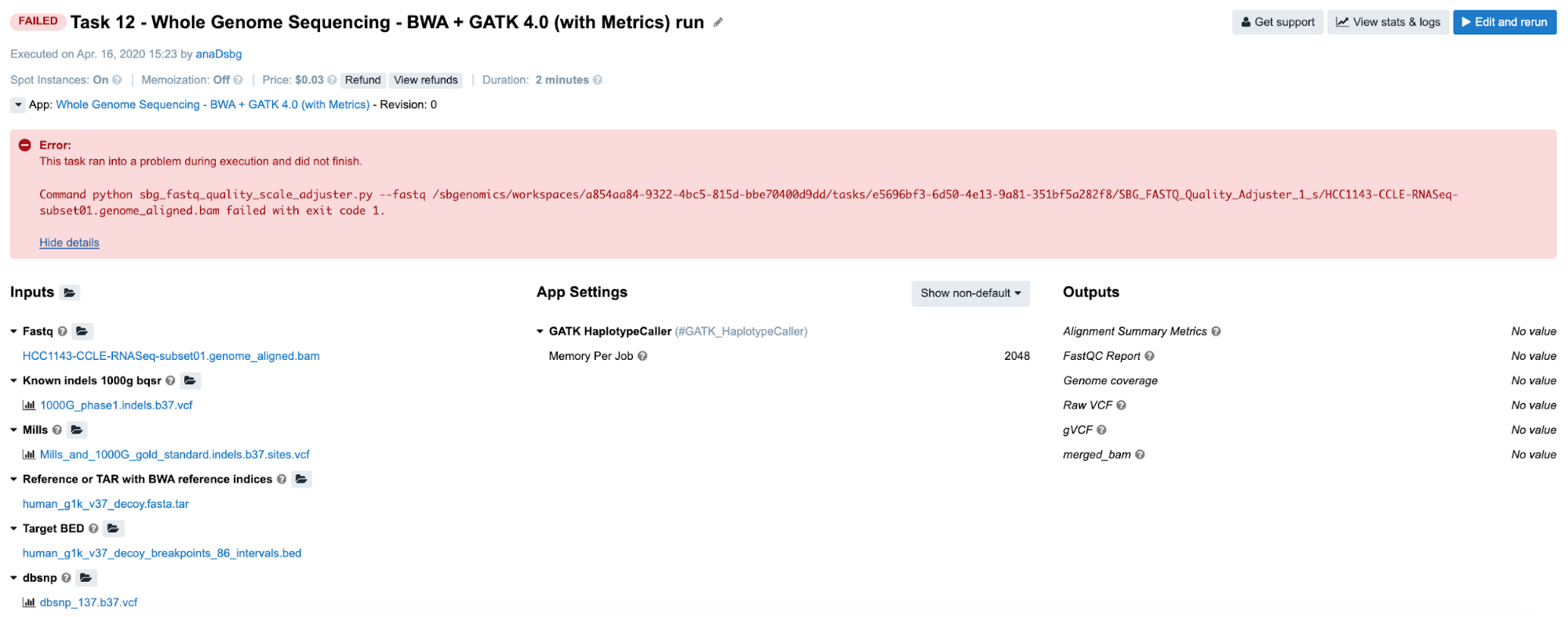

Task 12: Invalid input file format

Diagnosis:

-

Looking at the error message on the task page gives no clues to the failure cause, so we aim for the

job.err.logfile of the app that failed. -

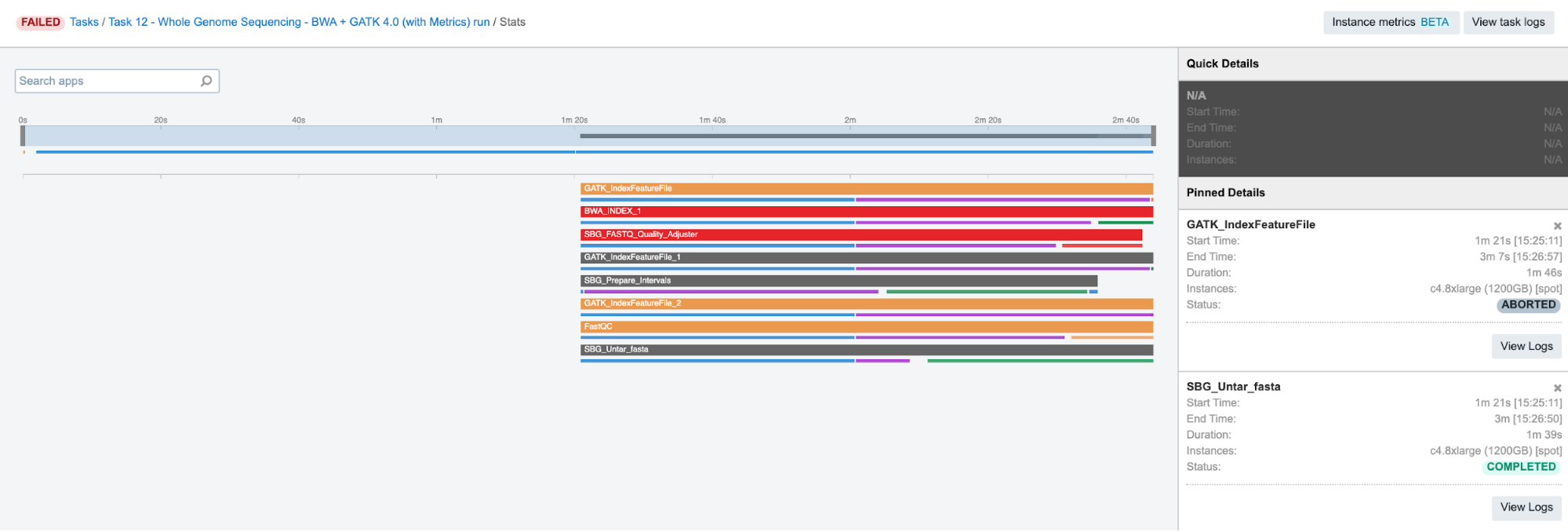

Continuing to the View stats & logs page where we find multiple aborted jobs and two failed ones (this can be different in different runs of the same task, e.g. only one job has failed on SBPLA public project):

Figure 9. Task 12 stats and logs panel.

Figure 9. Task 12 stats and logs panel. -

Content of the

job.err.logfile:... 2019-12-19T17:45:18.132888414Z Exception: Quality scale for range (0, 255) not found.This is not so helpful, but it looks like we are providing the data which is out of the allowed/expected range. Hence, the next question that comes to mind is: “What data am I providing?”

-

This brings us to the next step – checking the inputs for this particular app. Luckily, there’s only one – the input named Fastq to which we have passed a BAM file. And that is the answer – apparently, the app does not work with BAMs, which we can also double check by inspecting the app description.

Considering that the SBG FASTQ Quality Adjuster was reported in the error message on the task page, we click on it and open up the corresponding log files.

Task 13: Invalid input file format

Diagnosis:

-

The first error message indicates that the tool has failed, so we have to look elsewhere.

-

On the View stats & logs panel, we select the GATK_IndexFeatureFile_2 job and go to logs. Next, we open up

job.err.logfile where we spot the error among other lines:... 2019-12-19T17:45:31.737283894Z *********************************************************************** 2019-12-19T17:45:31.737294139Z 2019-12-19T17:45:31.737466005Z A USER ERROR has occurred: Cannot read file:///sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/41629e29-ee79-4920-8247-d529d0513cee/GATK_IndexFeatureFile_2/merged-normal.bam because no suitable codecs found 2019-12-19T17:45:31.737496822Z 2019-12-19T17:45:31.737504059Z *********************************************************************** ... -

This again looks like a problem with the input file. If we check out the descriptions of the GATK IndexFeatureFile app, we can see that it only works with VCF and BED formats.

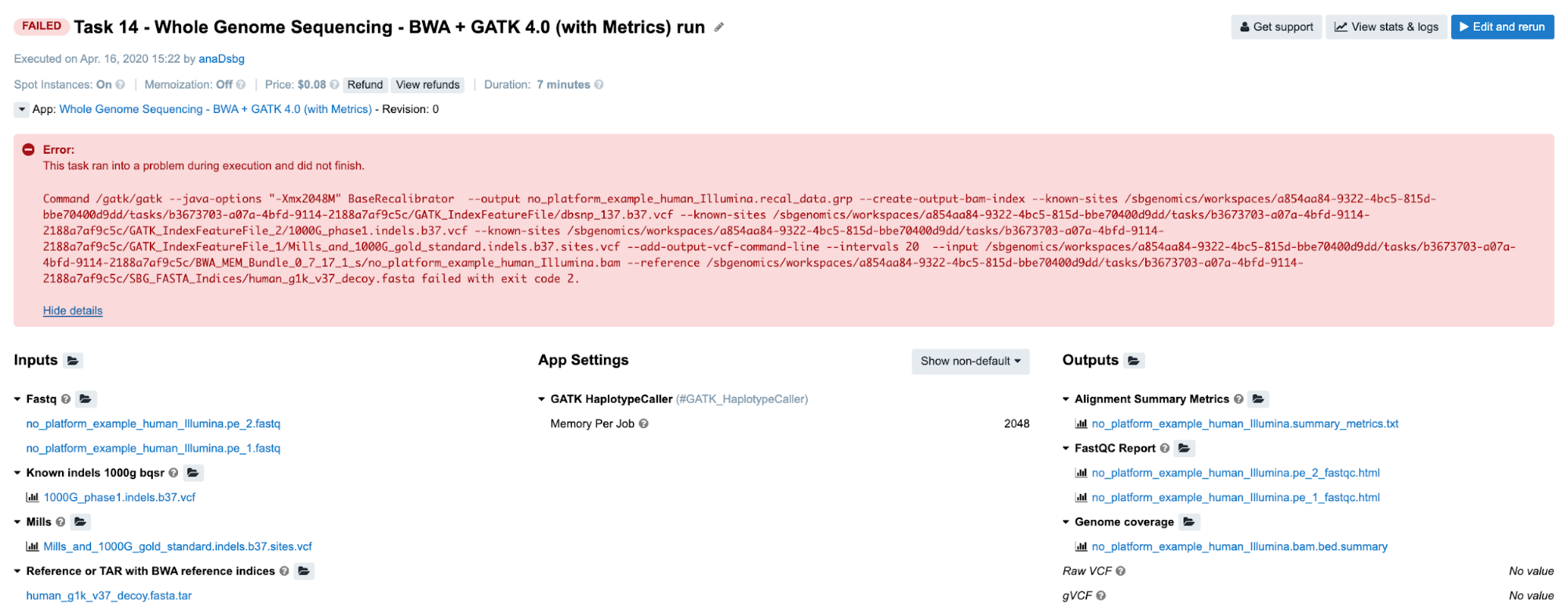

Task 14: Missing required metadata

Diagnosis:

-

Obviously, we need to try to find answers by checking out the logs.

-

Again, we inspect

job.err.logfile of the GATK_BaseRecalibrator job and detect a user error:... 2019-12-19T17:48:59.599450389Z *********************************************************************** 2019-12-19T17:48:59.599460506Z 2019-12-19T17:48:59.599469582Z A USER ERROR has occurred: Read SRR316957.3598317 20:29623037-29623112 is malformed: The input .bam file contains reads with no platform information. First observed at read with name = SRR316957.3598317 2019-12-19T17:48:59.599479224Z 2019-12-19T17:48:59.599487229Z *********************************************************************** ... -

Now, we have enough reasons to suspect input file format or validity. However, we can quickly eliminate the potential issues with the format since the app accepts BAMs. So the question is: “What else can I check before digging into the problematic file itself?” What SB bioinformaticians tend to do is to try all of the cheap checks before examining the content of the inputs. It seems like everything is good with how the command line is built since the tool started analyzing the content of the file, but we can also check the

cmd.logfile to confirm this. Afterwards, there is nothing else worth checking in the log files, so the last quick resource is the Common Issues and Important Notes section from the workflow descriptions. Fortunately, we find exactly what we need there: the input lacks the required Platform metadata.

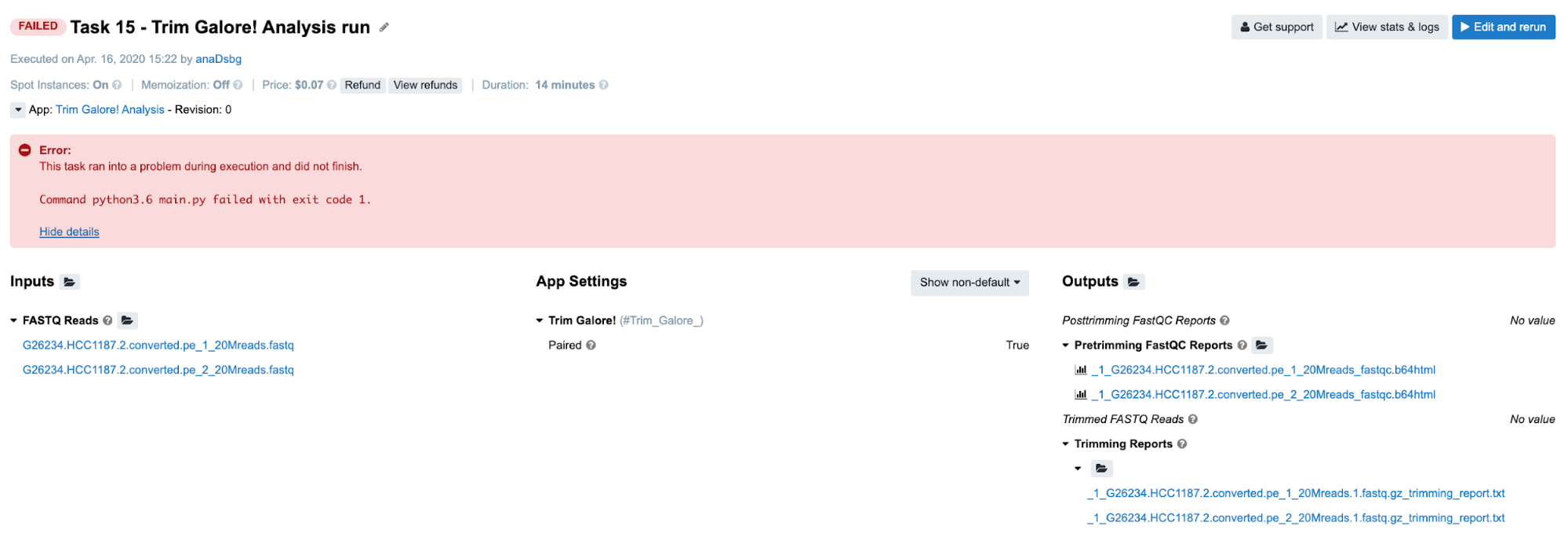

Task 15: Incompatible input/output types

Diagnosis:

-

No clues on the task page, so we look further.

-

From the View stats & logs panel we observe that SBG_FASTQ_Merge job has failed and we examine its

job.err.logfile:2019-12-19T17:54:31.747133958Z Traceback (most recent call last): 2019-12-19T17:54:31.747171107Z File "main.py", line 36, in 2019-12-19T17:54:31.747176056Z main() 2019-12-19T17:54:31.747178937Z File "main.py", line 23, in main 2019-12-19T17:54:31.747181776Z sort_by_metadata_key='file_segment_number') 2019-12-19T17:54:31.747184526Z File "/usr/lib/python3.6/CWL.py", line 207, in group_by 2019-12-19T17:54:31.747187528Z inputs=deepcopy(self._inputs)[input_key]) 2019-12-19T17:54:31.747190315Z File "/usr/lib/python3.6/CWL.py", line 193, in group_by_metadata_key 2019-12-19T17:54:31.747194228Z for key, val in self.full_group_by(inputs, key=lambda x: x[METADATA_KEY][metadata_key] 2019-12-19T17:54:31.747197073Z File "/usr/lib/python3.6/CWL.py", line 179, in full_group_by 2019-12-19T17:54:31.747200068Z k = key(item) 2019-12-19T17:54:31.747202766Z File "/usr/lib/python3.6/CWL.py", line 194, in 2019-12-19T17:54:31.747205639Z if metadata_key in x[METADATA_KEY] 2019-12-19T17:54:31.747208359Z TypeError: list indices must be integers or slices, not str -

After seeing this you may think “Am I supposed to understand the underlying Python code to solve this?” The answer is no. However, you need to understand that the input you have provided to this app caused its failure. So, the next logical step is to check what exactly you provided to this particular app. The easiest way to do this is to inspect the

job.jsonfile which contains complete configurations for this job, i.e. parameters, resources and inputs. The string that you should search for in this file is"inputs" : {. It’s always the last key in this log file and it contains information about all inputs for the corresponding job. This is how it looks like in this case:... "inputs" : { "fastq" : [ [ { "checksum" : "sha1$c65d0cb714193ebe4badca68ec0fb37f39926b71", "class" : "File", "contents" : null, "dirname" : "/sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/c7d7b7cc-65d8-48a9-94e4-c1a507dabe04/Trim_Galore__1_s", "location" : "/sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/c7d7b7cc-65d8-48a9-94e4-c1a507dabe04/SBG_FASTQ_Merge/G26234.HCC1187.2.converted.pe_1_20Mreads.1_val_1.fq.gz", "metadata" : { "case_id" : "CCLE-HCC1187", "experimental_strategy" : "RNA-Seq", "file_segment_number" : 1, "investigation" : "CCLE-BRCA", "paired_end" : "1", "platform" : "Illumina", "reference_genome" : "HG19_Broad_variant", "sample_id" : "HCC1187_20M", "sample_type" : "Cell Line", "sbg_public_files_category" : "test" }, "name" : "G26234.HCC1187.2.converted.pe_1_20Mreads.1_val_1.fq.gz", "originalPath" : "/mnt/nosbgfs/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/c7d7b7cc-65d8-48a9-94e4-c1a507dabe04/Trim_Galore__1_s/G26234.HCC1187.2.converted.pe_1_20Mreads.1_val_1.fq.gz", "path" : "/sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/c7d7b7cc-65d8-48a9-94e4-c1a507dabe04/SBG_FASTQ_Merge/G26234.HCC1187.2.converted.pe_1_20Mreads.1_val_1.fq.gz", "size" : 155469308 }, { ...The input of interest is

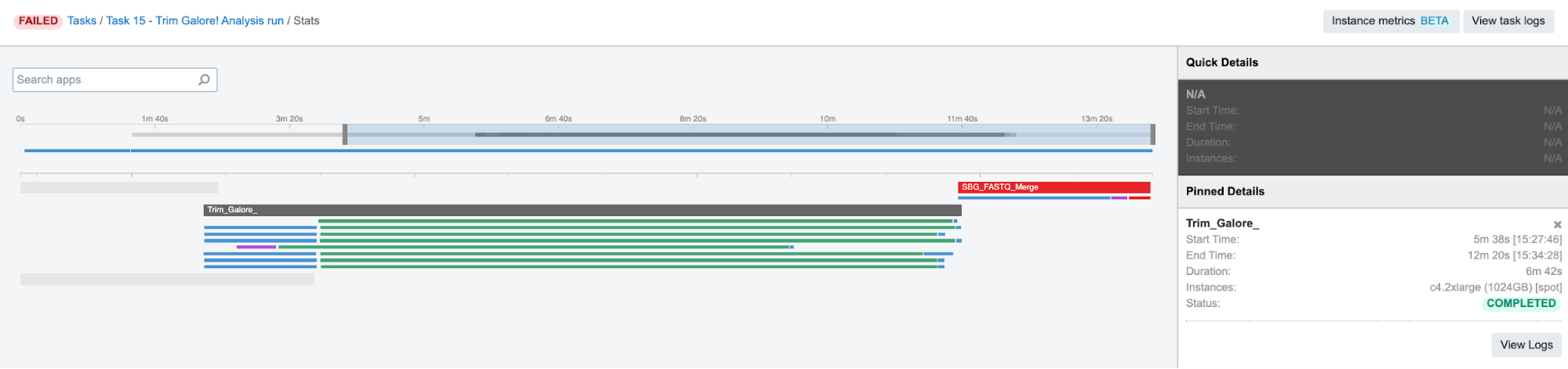

"fastq"and it begins with[ [meaning that this input is a list of lists. Because SBG_FASTQ_Merge is built to take only a single list of files as input, we may conclude that this is the reason for the task failure.But let us elaborate on this a bit more. The tool which precedes this one – Trim Galore! – is scattered and it produces a list of two trimmed FASTQ files per each of 8 jobs (green bar below Trim_Galore_ bar):

Figure 10. Trim Galore! parallel jobs.

Figure 10. Trim Galore! parallel jobs.Therefore, when all the lists of two files are created, they are passed to the next app as a list of lists. In cases like this, SBG Flatten or SBG FlattenLists apps can be added in between to convert any kind of nested lists into a single list.

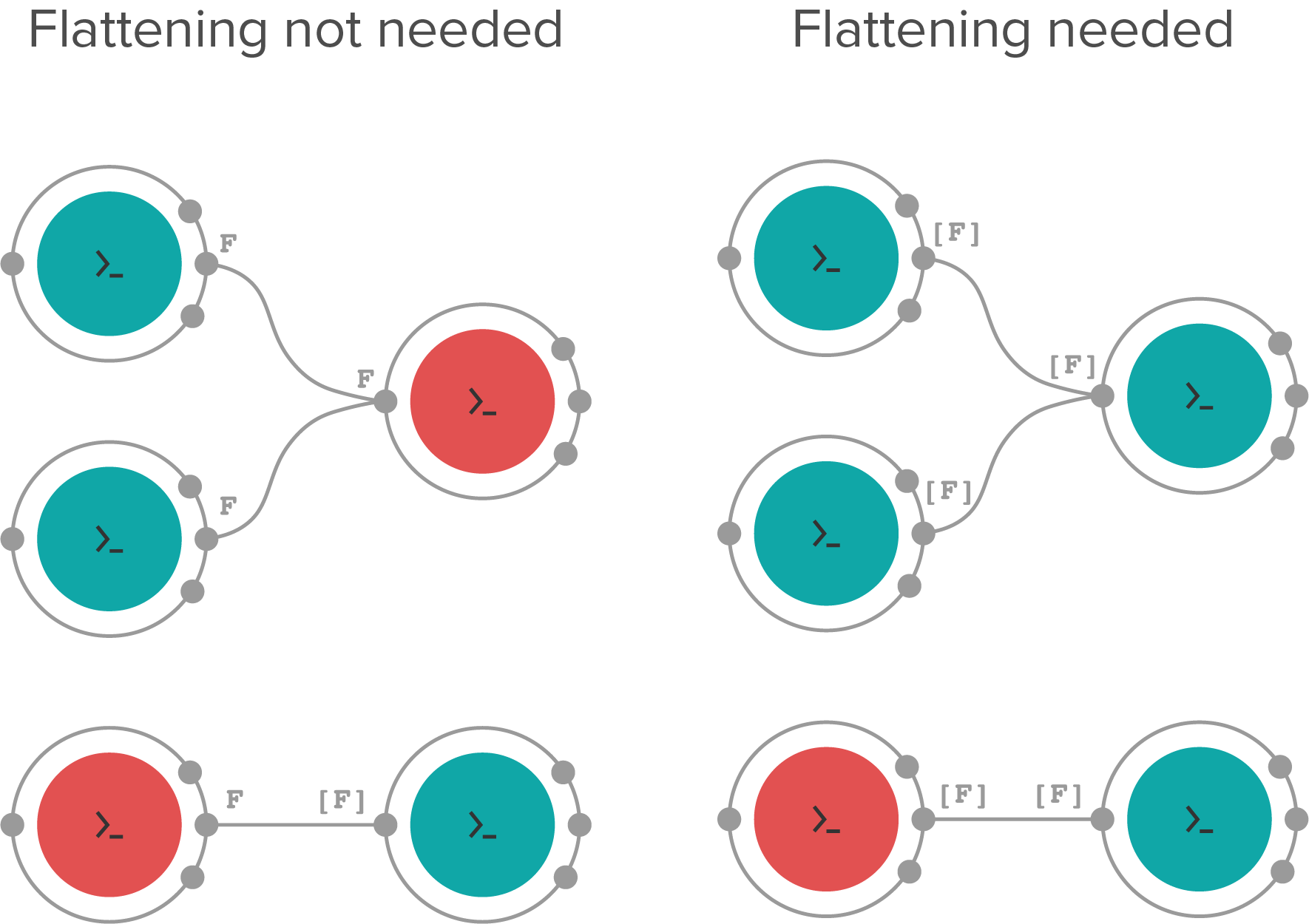

The following is a diagram showing example cases when flattening is needed and when it is not needed.

Figure 11. Different cases when flattening is needed and cases when it is not needed. Red nodes denote the apps in which scattering is used. Inputs/outputs types are denoted as F and [F], meaning single file and a list of files respectively. Note that the bottom right example matches the scenario in this task.

Figure 11. Different cases when flattening is needed and cases when it is not needed. Red nodes denote the apps in which scattering is used. Inputs/outputs types are denoted as F and [F], meaning single file and a list of files respectively. Note that the bottom right example matches the scenario in this task.

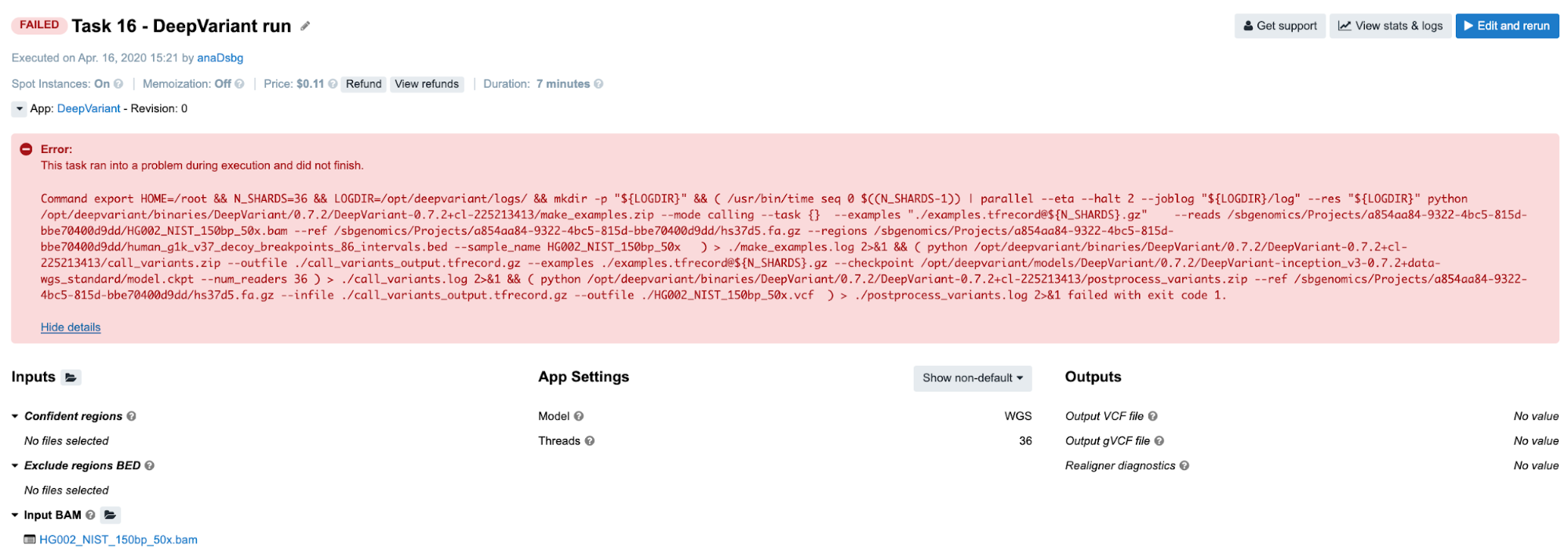

Task 16: Redirecting STDOUT to a file / Missing secondary files

Diagnosis:

-

The tool broke with a non-zero exit code, so we proceed to

job.err.logfile. -

The file is empty, but there is an additional log file

make_examples.logwhich contains both STDERR and STDOUT messages (we can confirm this from thecmd.logfile). We can get some hints related to the failure towards the end of the file:... ValueError: Not found: could not load fasta and/or fai for fasta /sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/hs37d5.fa.gz ... -

Since it looks like there is a problem with FASTA file and its secondary file, we want to check out

"reference"input from thejob.jsonfile:... "reference" : { "checksum" : null, "class" : "File", "contents" : null, "dirname" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f", "location" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/hs37d5.fa.gz", "metadata" : { }, "name" : "hs37d5.fa.gz", "path" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/hs37d5.fa.gz", "secondaryFiles" : [ { "checksum" : null, "class" : "File", "contents" : null, "dirname" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f", "location" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/hs37d5.fa.gz.fai", "metadata" : { }, "name" : "hs37d5.fa.gz.fai", "path" : "/sbgenomics/Projects/0500a838-8897-4f04-ba14-8bd62f75bb5f/hs37d5.fa.gz.fai", "size" : 2813 } ], "size" : 892384594 }, ...Well, it looks like both files are there – FASTA and its FAI index as a secondary file. So what is wrong then?

If you are working a lot with those kinds of files, you may have noticed that gzipped versions of FASTA and its index are almost never used by any bioinformatics tools. Hence, it may be worthwhile visiting the tool’s page and making sure that this is the right format to use.

-

After circling around DeepVariant GitHub for a few minutes, we learn that a

.fa.gz.gzifile is needed in addition to the.fa.gz.faiwhen gzipped versions are in use. Otherwise, when regular, uncompressed FASTA is provided, index.faiis sufficient. Note that public apps and workflows are designed such that index files are not provided explicitly on the inputs. Instead, those are automatically recognized if present in the project and fetched along with the file (e.g. FASTA) they are associated with.

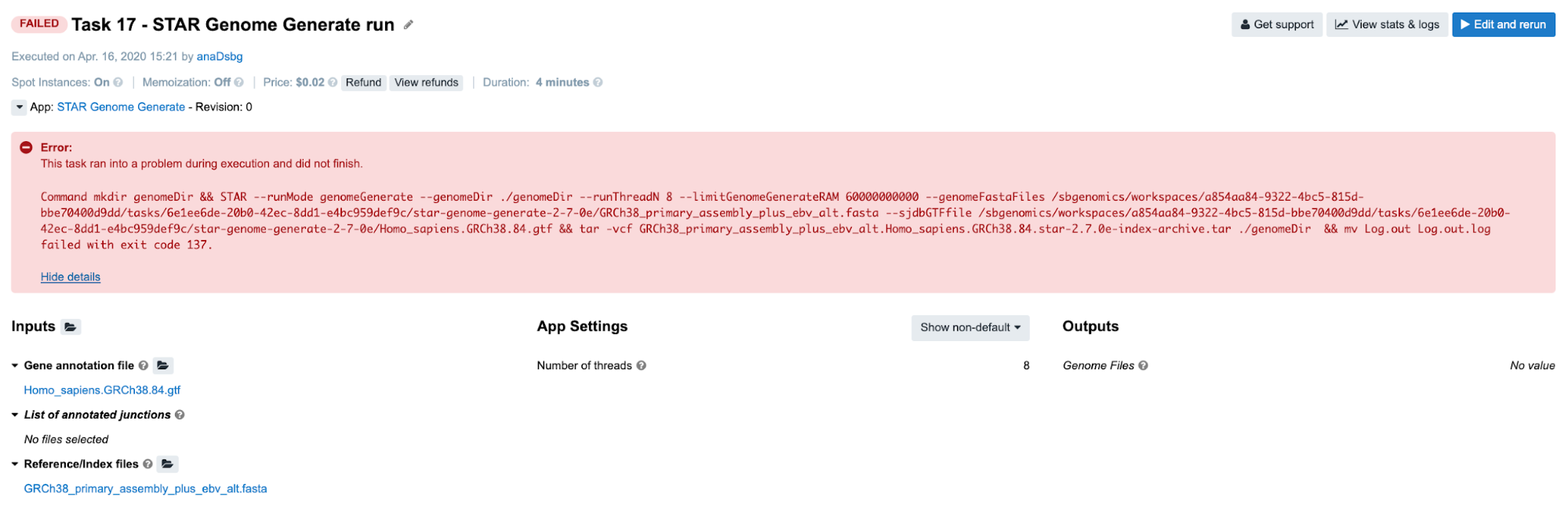

Task 17: Insufficient Memory

Diagnosis: As usual, we try to get some clues from the job.err.log file, since there is not much information on the task page. Here is what we read there:

2019-12-19T17:48:21.090915287Z Killed

Skimming through the Troubleshooting Cheat Sheet, the message that we see most likely falls under the D box. If we go through all the items listed within this category, we’ll eliminate all but the last one. For those who don’t have much experience with debugging, the exception message Killed is triggered by the kernel and it is always associated with resource exhaustion (e.g. memory/swap). So, the next time you spot such an exception message in the log files, you can start focusing on the memory issue immediately.

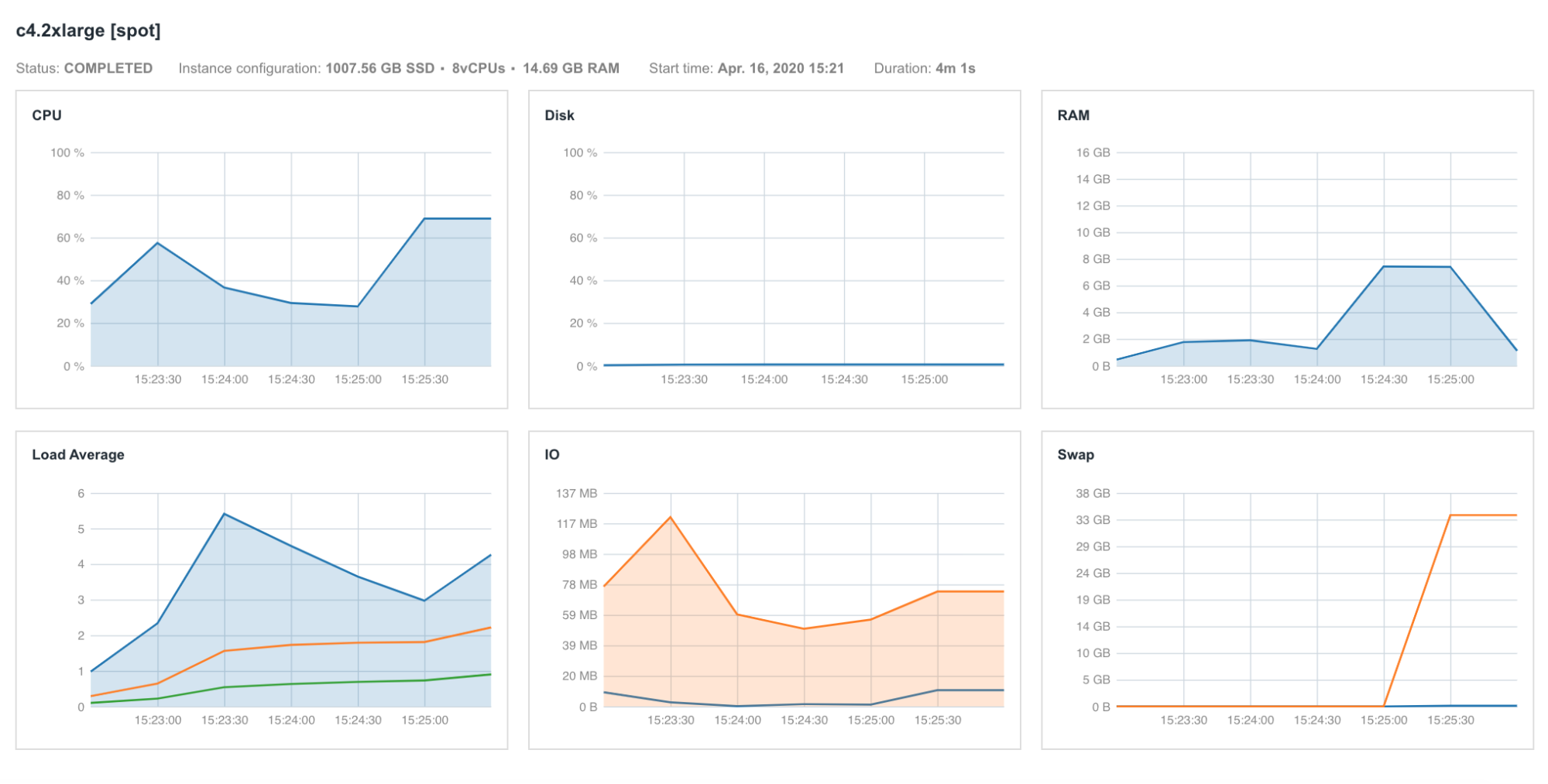

If we try to confirm this by looking at Instance metrics (Figure 12), we see that the RAM profile didn’t go anywhere near the maximum value and we may assume that the memory is not an issue. However, the Swap diagram below RAM profile shows that swap memory usage was extremely high, indicating that there was a significant demand for the memory that couldn’t be served by the system, so the tool initiated allocation of additional, virtual memory called swap.

In case you are wondering why we don’t see a peak in RAM diagram too, the reason for that might lay in the fact that our services don’t record values for each point in time. Instead, the usage is measured every 30 seconds, and that is why it is possible that certain glitches that occur quickly are missed. For example, if there were recorded data points for the interval between 15:24:30 and 15:25:00, we would have noticed that the RAM usage was very close to 16 GB.

In this particular case, we changed the memory requirement directly in the wrapper from 60,000 MB to 1,000 MB, just to showcase this error.

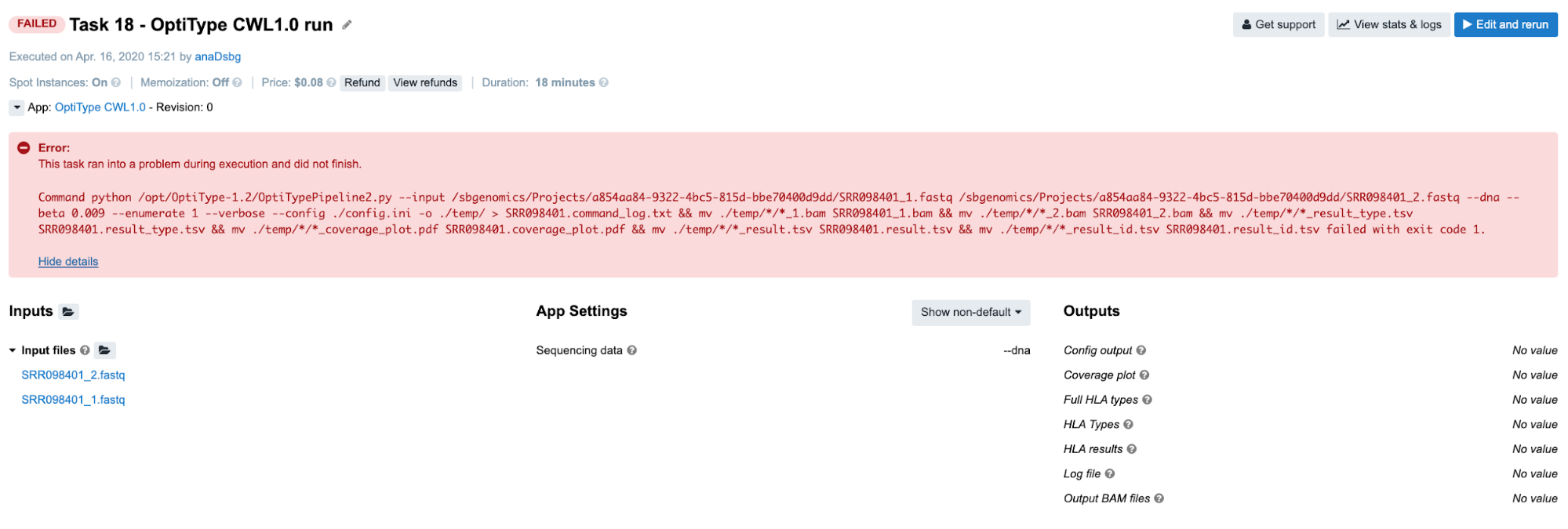

Task 18: Insufficient Memory

Diagnosis: Once more, we refer to the job.err.log content. The following are last few rows that are printed out:

...

2019-12-19T17:58:20.616060519Z Aborted (core dumped)

2019-12-19T17:58:20.907076315Z Traceback (most recent call last):

2019-12-19T17:58:20.907098242Z File "/opt/OptiType-1.2/OptiTypePipeline2.py", line 279, in

2019-12-19T17:58:20.908267039Z pos, read_details = ht.pysam_to_hdf(bam_paths[0])

2019-12-19T17:58:20.908281289Z File "/opt/OptiType-1.2/hlatyper.py", line 186, in pysam_to_hdf

2019-12-19T17:58:20.911066336Z sam = pysam.AlignmentFile(samfile, sam_or_bam)

2019-12-19T17:58:20.911073958Z File "pysam/calignmentfile.pyx", line 311, in pysam.calignmentfile.AlignmentFile.__cinit__ (pysam/calignmentfile.c:4929)

2019-12-19T17:58:20.911923318Z File "pysam/calignmentfile.pyx", line 480, in pysam.calignmentfile.AlignmentFile._open (pysam/calignmentfile.c:6905)

2019-12-19T17:58:20.911932456Z IOError: file `./temp/2019_12_19_17_50_50/2019_12_19_17_50_50_1.bam` not found

We see that the last message refers to a certain file that has been created during the run time and only temporarily stored (it was located in ./temp directory). This suggests that the error wasn’t caused by the files that we have directly provided on inputs, but that some other downstream process might have interrupted the tool execution. That doesn’t tell us much, but we could try to go through the suggestions found within the cheat sheet. However, we might as well spot one of the earlier messages which says Aborted (core dumped) and save us some time if we are familiar with this error. Similarly to a previous case where we encountered a Killed message, core dumped can too be an indicator that there was an issue with memory.

Indeed, if we rerun the task using an instance with more memory (e.g. c4.8xlarge), the tool will complete successfully. Even though the instance profiles don’t show this clearly (Figure 13), we need to keep in mind that the tools work in different ways. Sometimes, the tools will detect that there is not enough resources and simply break the execution with some of the abovementioned messages. In that case, there is no evidence of an overload on the diagrams, but further testing with bigger instance types may be a worthwhile approach.

killed and core dumped can help navigate the next steps in the debugging process and therefore we decide to test the tools with bigger instance types.

Unlike killed, core dumped can be related to other underlying issues that are usually associated with the program itself. For example, the Kallisto Index tool will throw this error when we try to index reference genomes which contain sequences smaller in length than the k-mer used by Kallisto (e.g. 31 bp). Even though we usually index genomes which contain billions of base pairs, such scenarios still can occur when we try to index certain types of viral or bacterial sequences which are very small.

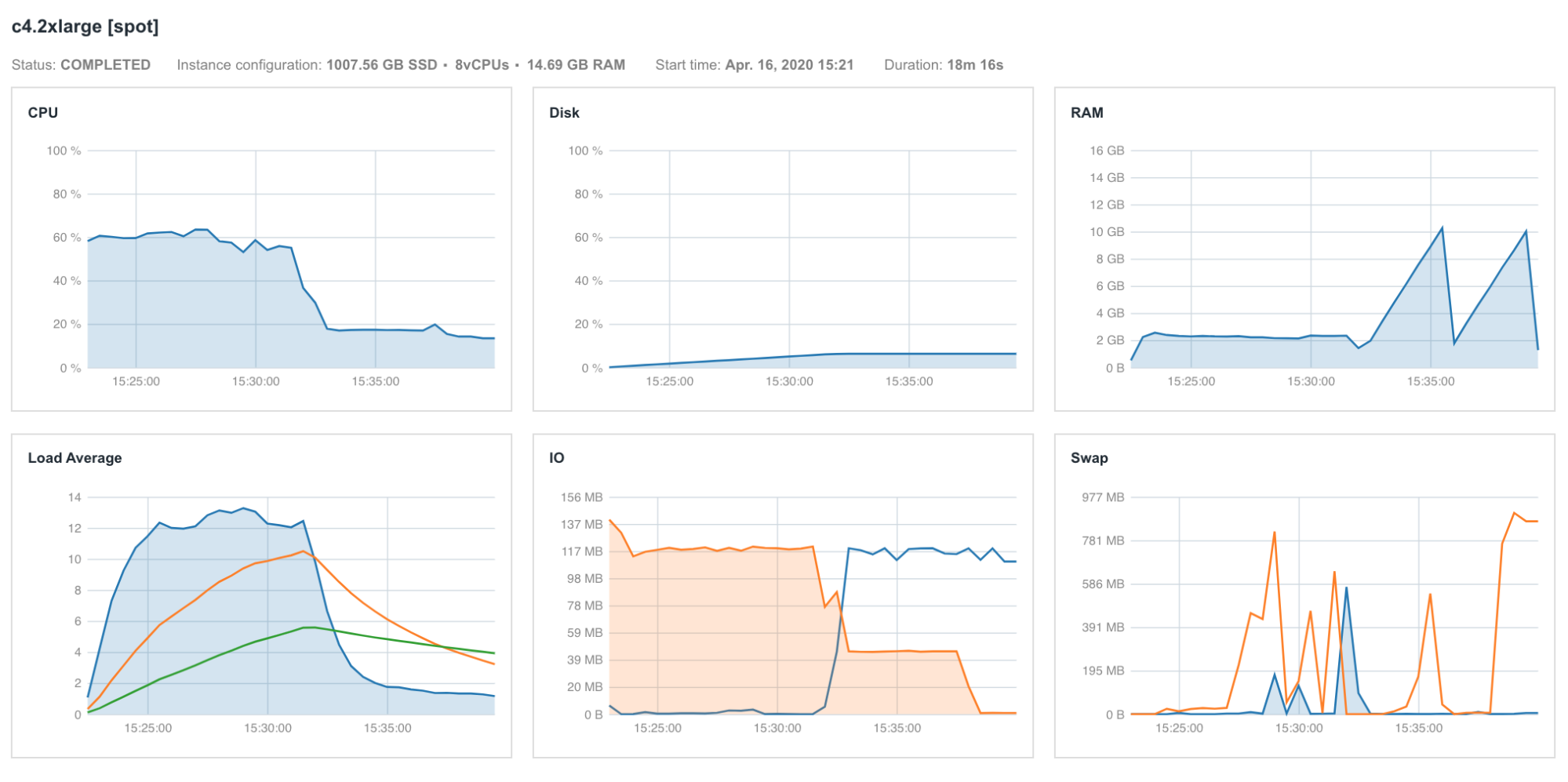

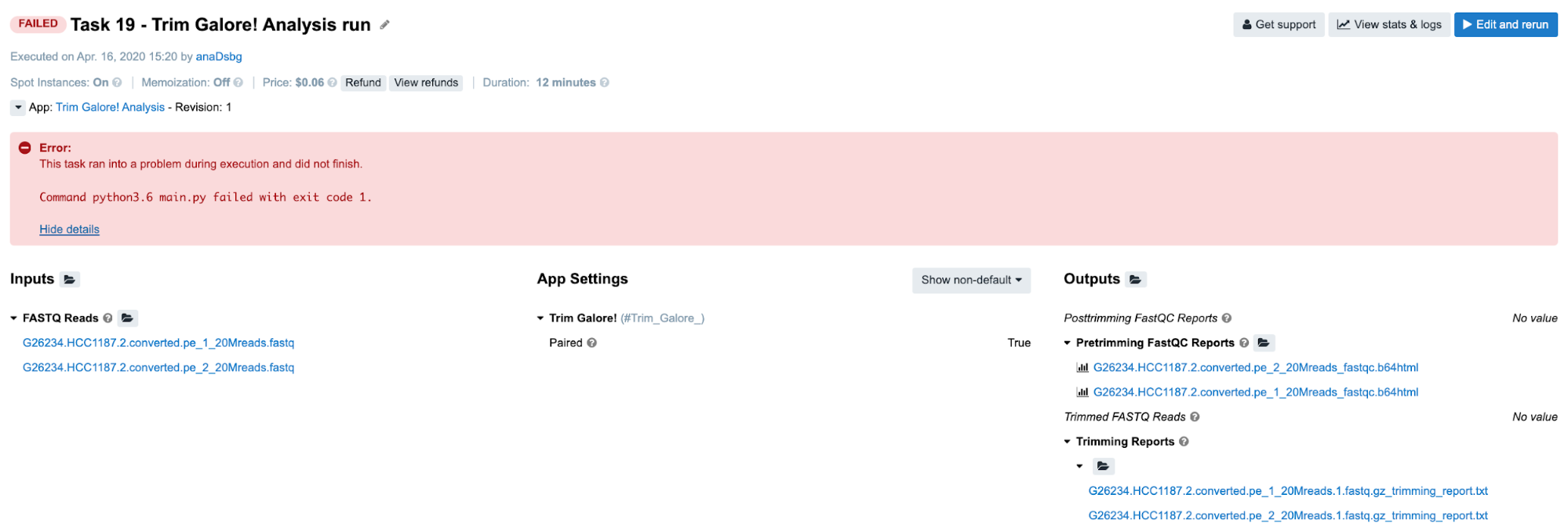

Task 19: Invalid output glob

Diagnosis:

-

Again, we are dealing with a non-specific message on the task page, hence we are heading to the View stats & logs panel.

-

There, we find out that the SBG_FASTQ_Merge app has failed and we check out its

job.err.logcontent:2019-12-19T17:52:16.079997001Z Traceback (most recent call last): 2019-12-19T17:52:16.080023406Z File "main.py", line 36, in 2019-12-19T17:52:16.080028176Z main() 2019-12-19T17:52:16.080031080Z File "main.py", line 25, in main 2019-12-19T17:52:16.080034124Z files_location = str(cwl.inputs['fastq'][0]['path']) 2019-12-19T17:52:16.080037063Z IndexError: list index out of range -

Similarly as in Task 15, we see a Python error message (last line) which is a strong indicator that we are providing objects which can’t be accessed in an expected way. In line with that, the row before the last one reveals that the

fastqinput should be a list with at least one element (so we can fetch it by callingcwl.inputs['fastq'][0])which has apathattribute. Hence, it’s likely that we are not providing a list at all, or there might be something wrong with the content of the list or its elements. So the first order of business is to check this. -

To examine the inputs, we can either use

job.jsonfrom SBG_FASTQ_Merge orcwl.output.jsonof the preceding app. When there is only onecwl.output.jsonfile for the preceding tool (i.e. it’s not scattered), it is much more practical to check this file rather thanjob.jsonwhich contains a lot of other information that we are not interested in now (e.g. parameters, resource details etc.). Here is what we find:{"flat": []} -

After we are aware that an empty list went out of the SBG_Flatten app, we trace back to the Trim_Galore jobs which provided inputs for the SBG_Flatten. There, we follow the same strategy and check out the content of

cwl.output.json. Here is what we find in one of the files:{ "fastqc_report_html" : [ ], "fastqc_report_zip" : [ ], "trimmed_reads" : [ ], "trimming_report" : [ { "checksum" : "sha1$26187e89e00fb1444436c04ae0ea6c536fedc4b9", "class" : "File", "dirname" : null, "location" : null, "metadata" : null, "name" : "G26234.HCC1187.2.converted.pe_1_20Mreads.1.fastq.gz_trimming_report.txt", "path" : "/sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/8cb6bfe5-061b-4e3f-904f-8323ec44d55a/Trim_Galore__1_s/G26234.HCC1187.2.converted.pe_1_20Mreads.1.fastq.gz_trimming_report.txt", "size" : 3809 }, { "checksum" : "sha1$9dd3e99d7ea6b209eb817df6abd7c799d938e99f", "class" : "File", "dirname" : null, "location" : null, "metadata" : null, "name" : "G26234.HCC1187.2.converted.pe_2_20Mreads.1.fastq.gz_trimming_report.txt", "path" : "/sbgenomics/workspaces/0500a838-8897-4f04-ba14-8bd62f75bb5f/tasks/8cb6bfe5-061b-4e3f-904f-8323ec44d55a/Trim_Galore__1_s/G26234.HCC1187.2.converted.pe_2_20Mreads.1.fastq.gz_trimming_report.txt", "size" : 3864 } ], "unpaired_reads" : [ ] } -

Apparently, the output of interest,

trimmed_reads, contains an empty list. However, tracing back further doesn’t make sense any more, since TrimGalore! seems to be working: there are 8 jobs and all of these lasted for a certain amount of time and completed successfully. Therefore, we assume that there might be some product of this work, but we may have missed it somehow. To check this hypothesis, we have another resource among log files which gives us a snapshot of the working directory after the job has completed:job.tree.log. There, we can look for the files that are missing from our output:. ├── [-rw-r--r-- 370 Dec 19 5:46.26 UTC] cmd.log ├── [-rw-r--r-- 1.1K Dec 19 5:51.20 UTC] cwl.output.json ├── [-rw-r--r-- 3.7K Dec 19 5:48.05 UTC] G26234.HCC1187.2.converted.pe_1_20Mreads.1.fastq.gz_trimming_report.txt ├── [-rw-r--r-- 148M Dec 19 5:51.15 UTC] G26234.HCC1187.2.converted.pe_1_20Mreads.1_val_1.fq.gz ├── [-rw-r--r-- 3.8K Dec 19 5:51.16 UTC] G26234.HCC1187.2.converted.pe_2_20Mreads.1.fastq.gz_trimming_report.txt ├── [-rw-r--r-- 151M Dec 19 5:51.15 UTC] G26234.HCC1187.2.converted.pe_2_20Mreads.1_val_2.fq.gz ├── [-rw-r--r-- 21K Dec 19 5:51.17 UTC] job.err.log ├── [-rw-r--r-- 13K Dec 19 5:46.26 UTC] job.json └── [-rw-r--r-- 0 Dec 19 5:51.20 UTC] job.tree.log 299M used in 0 directories, 9 files -

The two

fq.gzfiles that we have expected to see on our output are indeed listed here. This immediately suggests that there might be a problem with the glob expression on this particular output. We can inspect this either by opening TrimGalore! in the app editor, or by checking thejob.jsonfile for any of the jobs. Finally, we confirm that the glob is invalid and has the following value:*fastq. This means that we falsely assumed thefastqextension for the trimmed output reads instead offq.gz.

Although a bit lengthy, this example uniquely demonstrates the value of almost all available log files. It also shows certain aspects of the decision-making process during debugging, which usually improves after a while and can save us a lot of time when done properly.

Closing Remarks

After reading this guide, you now have the know-how and confidence to resolve many different issues that you may encounter when working on BioData Catalyst powered by Seven Bridges. These example tasks represent the most common issues and their corresponding debugging directions that the Seven Bridges team has encountered through extensive work with various bioinformatics applications, but is by no means comprehensive: as datasets, tools, and workflows are constantly changing, it would be near-impossible to account for every possible error. However, this guide is not the only helpful tool at your disposal! Are you just getting started on BioData Catalyst powered by Seven Bridges, and need help setting up a project? See our comprehensive tips for reliable and efficient analysis set-up. If you need more information on a particular subject, our Knowledge Center has additional information on all of the features of the Platform. Really stuck on a tough technical issue, or encounter a stubborn bug in your workflow that just won’t go away? Contact our Support Team, and they will assist as soon as possible!